As the political season continues to heat up, it is the perfect time to check out some of the most watched domain names in the US: the websites of the major Democratic and Republican candidates for President of the United States. In the wake of the South Carolina Republican primary on February 20, we set up an automated Web browser to visit each campaign site every 30 minutes and to track the splash screens soliciting campaign contributions, which urge visitors to join the team, family, movement, or revolution.

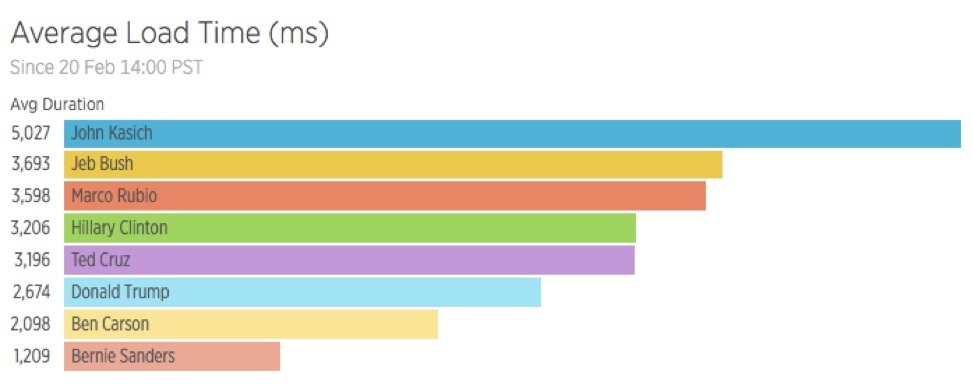

Political ideology does not seem to have any connection to overall page load time. The average page load time, meaning the time it takes Google Chrome to completely load the landing page of each major campaign site, varies widely from site to site.

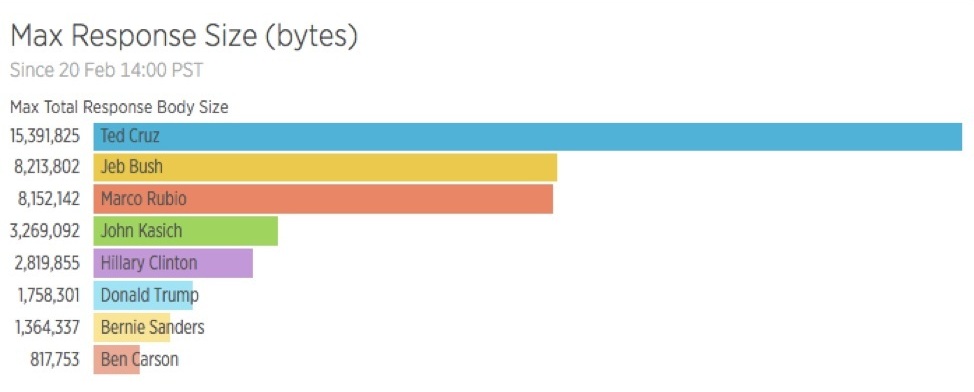
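As a rough sketch of how per-site averages like these can be computed, the Python below summarizes load-time samples collected at each 30-minute check. The input shape (a dict mapping a site to a list of load times in milliseconds), the function name, the site names, and the numbers are all hypothetical placeholders, not the data behind the chart.

```python
from statistics import mean, pstdev


def summarize_load_times(samples: dict[str, list[float]]) -> dict[str, dict[str, float]]:
    """Return mean, spread, and range of load times (ms) for each site.

    `samples` is a hypothetical structure: one list of load times per site,
    one entry per synthetic check.
    """
    summary = {}
    for site, times in samples.items():
        if not times:
            continue  # skip sites with no successful checks
        summary[site] = {
            "mean_ms": mean(times),
            "stdev_ms": pstdev(times),  # population std. dev. over the samples
            "min_ms": min(times),
            "max_ms": max(times),
        }
    return summary


# Hypothetical samples, one per 30-minute check.
samples = {
    "site-a.example": [2100.0, 2300.0, 1900.0],
    "site-b.example": [5200.0, 4800.0, 5000.0],
}
for site, stats in summarize_load_times(samples).items():
    print(f"{site}: mean {stats['mean_ms']:.0f} ms, stdev {stats['stdev_ms']:.0f} ms")
```

Reporting the spread and range next to the mean helps show whether a slow average comes from consistently slow loads or from a few outliers.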

Not surprisingly, there is evidence that total page size (the sum of all responses for images, fonts, HTML, CSS, and JavaScript) affects overall performance. All of the campaign sites were image-heavy, and all those photos of smiling supporters come at a measurable cost.

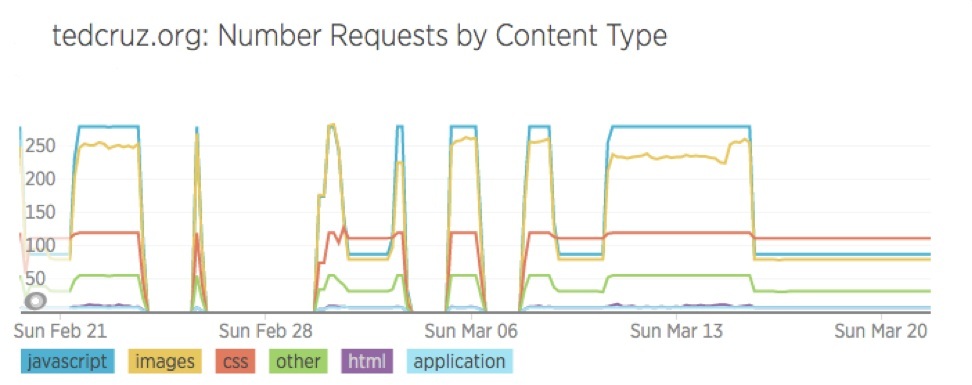
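Total page weight, as described above, is just the sum of every response broken down by type. The sketch below assumes each response has already been captured as a dict with a MIME type and a size in bytes (similar in spirit to a HAR entry, but the field names `mime_type` and `size_bytes` and the category mapping are hypothetical simplifications), and the sample sizes are invented for illustration.

```python
from collections import defaultdict


def categorize(mime_type: str) -> str:
    """Map a response MIME type to a coarse resource category (hypothetical mapping)."""
    mime = mime_type.split(";")[0].strip().lower()  # drop parameters such as charset
    if mime.startswith("image/"):
        return "image"
    if mime.startswith("font/") or mime in {"application/font-woff", "application/x-font-ttf"}:
        return "font"
    if mime == "text/html":
        return "html"
    if mime == "text/css":
        return "css"
    if mime in {"application/javascript", "text/javascript", "application/x-javascript"}:
        return "javascript"
    return "other"


def page_weight(responses: list[dict]) -> tuple[int, dict[str, int]]:
    """Sum response sizes (bytes) per category and for the whole page."""
    by_category: defaultdict[str, int] = defaultdict(int)
    for response in responses:
        by_category[categorize(response["mime_type"])] += response["size_bytes"]
    return sum(by_category.values()), dict(by_category)


# Hypothetical responses for one page load.
responses = [
    {"mime_type": "text/html; charset=utf-8", "size_bytes": 40_000},
    {"mime_type": "text/css", "size_bytes": 60_000},
    {"mime_type": "application/javascript", "size_bytes": 300_000},
    {"mime_type": "font/woff2", "size_bytes": 80_000},
    {"mime_type": "image/jpeg", "size_bytes": 1_200_000},
]
total, by_category = page_weight(responses)
for category, size in sorted(by_category.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{category:>10}: {size / 1024:8.1f} KiB ({size / total:.0%} of page)")
```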

Looking at Ted Cruz’s site, for example, segmenting requests by content type suggests that average load time is most affected by increases in JavaScript, CSS, and images served from a single host.
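Segmenting requests by host and content type, as in the Cruz example, might look like the following minimal sketch. It assumes each captured request is a dict with a URL, a MIME type, and a size in bytes; those field names, the function names, and the URLs are hypothetical, and this is not the script used for the analysis.

```python
from collections import Counter, defaultdict
from urllib.parse import urlsplit


def segment_requests(requests: list[dict]) -> tuple[Counter, dict]:
    """Group requests by (host, MIME type), counting requests and bytes per group."""
    counts: Counter = Counter()
    sizes: defaultdict = defaultdict(int)
    for req in requests:
        host = urlsplit(req["url"]).hostname or "unknown"
        mime = req["mime_type"].split(";")[0].strip().lower()
        key = (host, mime)
        counts[key] += 1
        sizes[key] += req["size_bytes"]
    return counts, dict(sizes)


def bytes_per_host(sizes: dict) -> dict[str, int]:
    """Total bytes served by each host, across all content types."""
    per_host: defaultdict[str, int] = defaultdict(int)
    for (host, _mime), size in sizes.items():
        per_host[host] += size
    return dict(per_host)


# Hypothetical requests from one page load.
requests = [
    {"url": "https://www.candidate.example/app.js", "mime_type": "application/javascript", "size_bytes": 250_000},
    {"url": "https://www.candidate.example/style.css", "mime_type": "text/css", "size_bytes": 90_000},
    {"url": "https://www.candidate.example/hero.jpg", "mime_type": "image/jpeg", "size_bytes": 800_000},
    {"url": "https://cdn.thirdparty.example/widget.js", "mime_type": "application/javascript", "size_bytes": 50_000},
]
counts, sizes = segment_requests(requests)
for (host, mime), n in counts.most_common():
    print(f"{host:<26} {mime:<24} {n:>3} req {sizes[(host, mime)]:>9} B")
print(bytes_per_host(sizes))
```

Repeating this for every check and correlating each segment's bytes with that check's load time would be the next step toward a finding like the one above; that correlation is not shown here.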

Given how much page weight matters, reducing both response size and the number of requests is critical to improving overall load time.

The data suggests that the Clinton, Kasich and Trump campaigns change their sites less frequently than the Cruz and Sanders campaigns do. Fewer changes can also help reduce overall response time.
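One simple way to estimate how often a site changes is to hash the page captured at each check and count how often consecutive hashes differ. This is a hypothetical sketch, assuming the raw HTML of each 30-minute check is stored as a string; the post does not say how changes were actually detected.

```python
import hashlib


def count_changes(snapshots: list[str]) -> int:
    """Count how many times consecutive page snapshots differ."""
    digests = [hashlib.sha256(s.encode("utf-8")).hexdigest() for s in snapshots]
    return sum(1 for prev, cur in zip(digests, digests[1:]) if prev != cur)


def changes_per_day(snapshots: list[str], interval_minutes: int = 30) -> float:
    """Estimate changes per day, given snapshots taken every `interval_minutes`."""
    if len(snapshots) < 2:
        return 0.0  # need at least two snapshots to observe a change
    observed_days = (len(snapshots) - 1) * interval_minutes / (60 * 24)
    return count_changes(snapshots) / observed_days


# Hypothetical snapshots from five consecutive checks.
snapshots = ["<html>v1</html>", "<html>v1</html>", "<html>v2</html>", "<html>v2</html>", "<html>v3</html>"]
print(count_changes(snapshots), changes_per_day(snapshots))
```

Hashing raw HTML will also flag pages whose markup differs on every request (embedded timestamps, tokens, rotating banners), so in practice such fragments might need to be stripped before hashing.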

According to NPR, some $4.4 billion is expected to be spent on television advertising alone in this election cycle, and the Web is a crucial part of campaign financing. Even milliseconds of added load time can lead to page abandonment and lost donations. Given the vast amounts of money being raised through political websites, it is surprising that site performance is as varied as the political positions of the candidates themselves.

For any website, including the ones for the next president of the United States, here are some best practices informed by the performance data that we’ve collected:

■ Understand how your Web pages perform in the real world. Even simple Selenium scripts can reveal a large amount of actionable information for improving the experience of visitors (and donors).

■ When in doubt, reduce Web page bloat. HTTP/2 helps by multiplexing parallel requests over a single connection, but massive Web pages are slow no matter what.

■ Synthetic testing is a part of a much bigger idea: the power of having visibility into how an entire software stack is actually performing. Truly understanding all the reasons behind slow load times requires end-to-end visibility from the frontend to the backend.
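As an illustration of what a simple Selenium-style check can measure, the sketch below derives load phases from the browser's W3C Navigation Timing (Level 1) fields. It assumes the timing values have already been pulled from the page as a dict; the commented Selenium lines show one hypothetical way to do that. Note that `window.performance.timing` is deprecated in favor of `performance.getEntriesByType("navigation")`, and the function name here is made up.

```python
# Hypothetical collection step with Selenium (requires the selenium package and Chrome):
# from selenium import webdriver
# driver = webdriver.Chrome()
# driver.get("https://example.com")
# timing = driver.execute_script("return window.performance.timing.toJSON()")


def load_metrics(timing: dict) -> dict:
    """Derive page-load phases (ms) from Navigation Timing Level 1 fields."""
    start = timing["navigationStart"]
    if timing.get("loadEventEnd", 0) == 0:
        # loadEventEnd stays 0 until the load event has finished
        raise ValueError("page has not finished loading (loadEventEnd is 0)")
    return {
        "time_to_first_byte_ms": timing["responseStart"] - start,
        "dom_content_loaded_ms": timing["domContentLoadedEventEnd"] - start,
        "full_load_ms": timing["loadEventEnd"] - start,
    }


# Hypothetical epoch-millisecond timestamps from one page load.
timing = {
    "navigationStart": 1_456_000_000_000,
    "responseStart": 1_456_000_000_350,
    "domContentLoadedEventEnd": 1_456_000_001_900,
    "loadEventEnd": 1_456_000_004_200,
}
print(load_metrics(timing))
```

Running a check like this on a schedule, and storing the results per site, is the kind of synthetic monitoring the methodology note below describes, though the post's own tooling was the New Relic Synthetics API rather than this code.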

Regardless of whether your websites are hosted in the cloud, at home, or in a state-of-the-art data center, professionals of all political persuasions should be using monitoring tools to build better applications and user experiences.

Methodology: Candidate website performance data was generated using the New Relic Synthetics API, a simple Node.js script and the open-source requests library.

Disclosure: This blog post does not represent the political views of New Relic and should not be taken as an endorsement of any candidate.

Clay Smith is a Developer Advocate at New Relic.
