There was a time when consumers were so happy to have the power of a computer in their pockets that they’d put up with some usage flaws in exchange for information and entertainment on the go. But with higher costs of owning and using smartphones, and experiences enriched by 4G speeds, consumers have developed much higher performance expectations.

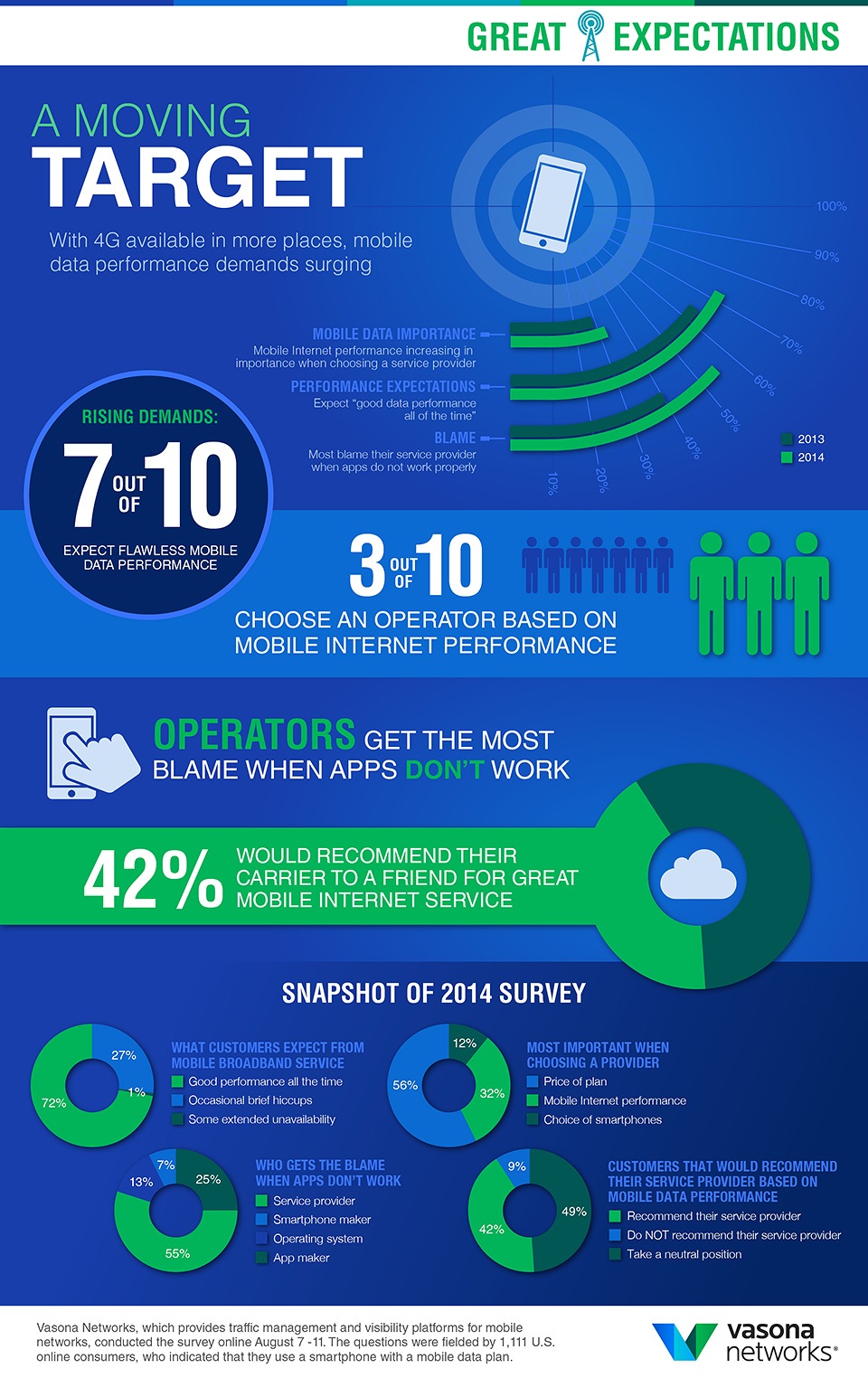

For the past two years, Vasona Networks has surveyed more than 1,000 smartphone owners about their mobile broadband performance expectations. This year, 72% of respondents said that they expect “good mobile data performance all of the time” with no hiccups or flaws. This is up 8% from the year before.

Even more striking is what we’ve learned about the increasing onus consumers put on their service providers to ensure great app experiences. In fact, the majority of consumers told us they hold their mobile operator most responsible when apps don’t function properly. This number is up to 55% from last year’s 40%, when app developers and operators were essentially tied for blame. This year, consumers that held the app developer most responsible dropped to 25%. In our most recent survey, the remaining 20% suspected either the device maker or operating system to be the cause of poor app performance. Considering recent operating system update struggles, perhaps there will be future increase in the blame placed there.

Regardless of where consumers place responsibility, delivering a great app experience is truly a shared burden across operators, technology providers and the developers of those apps.

On the app side, the developers that prioritize performance management work smartly to control the size of their apps, take advantage of the latest compression techniques, and give users control over how content is displayed depending on what type of network they’re connected to. These app developer strategies are well-covered by other authors on this site.

From our experience working with service providers, there are some exciting new techniques available for use in mobile networks that drive the best app experiences by smarter approaches to the RAN (Radio Access Network). Managing contending traffic that shares the cell air interface is a major area of focus. This is where bandwidth additions are most expensive, and, related to that, where congestion is most frequently encountered. Operators are finding better ways to address the diverse mixture of streaming media, web browsing and downloads that can cause severe congestion within cells.

Solutions like edge application controllers assess whether a cell faces congestion at any given moment, and understand which sessions are causing it and the experiences suffering the most as a result. Bandwidth is then reallocated based on application type and subscriber needs.

This is a leap beyond prior probe and DPI (Deep Packet Inspection) approaches that observe traffic patterns and congestion and then communicate through a policy control function to take enforcement action. But congestion and latency are transient phenomena that may last seconds or less. These small incidents can destroy app experiences and cause degradation with repercussions longer than the initial periods of congestion. In these cases, the information can be revealed too late by the probe and service experience is compromised before the DPI takes action.

The results of better approaches to RAN management are speaking for themselves. For instance, a US service provider using an edge application controller to manage the impact of congestion has achieved more than 30% improved bitrate performance for video and web browsing and more than 35% reduction in service latency during congestion. These numbers signify the difference between a great app experience and a frustrating one. Between a finger tapping happily on a screen or pointing angrily at the offending party.

As consumers stiffen their demands for mobile operators to assure flawless app experiences, the industry continues to move closer to that promise.

Click on the infographic below for a larger version.

John Reister is VP of Marketing and Product Management for Vasona Networks.