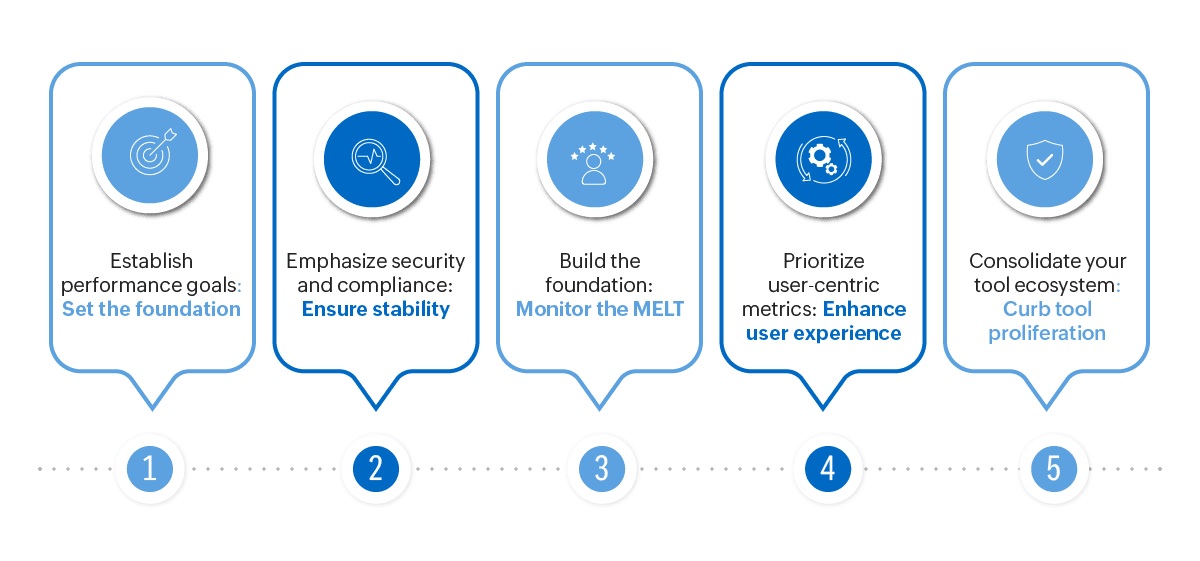

In today's digital era, monitoring and observability are indispensable in software and application development. Their efficacy lies in empowering developers to swiftly identify and address issues, enhance performance, and deliver flawless user experiences. Achieving these objectives requires meticulous planning, strategic implementation, and consistent ongoing maintenance. In this blog, we're sharing our five best practices to fortify your approach to application performance monitoring (APM) and observability.

1. Establish performance goals: Set the foundation

Monitoring your application's performance without clear targets is like driving without directions: it gets you nowhere. Establishing performance objectives fosters accountability, but setting goals is only the beginning. You also need a well-thought-out plan to achieve them, and that plan should account for several elements:

■ User experience: Know your users inside out, including who they are and what bugs them. Identify the top issues users face, like slow loading times or frequent errors. Aim for tangible improvements that directly address these pain points, such as "reducing page load times by X%." Break down the user experience into key stages and set specific performance goals for each stage to ensure a smooth experience throughout.

■ Industry benchmarks: Imagine that your application has an error rate objective of 2%. Sounds good, right? But had you known that the industry benchmark for high-performing applications was below 0.5%, you wouldn't be celebrating mediocrity. Incorporating industry benchmarks into your APM objectives gives you the perspective to identify performance gaps and set more ambitious, yet realistic, goals.

■ Collaboration and prioritization: Engage with key stakeholders, including developers, operations teams, and business representatives, to gather insights into user expectations and business requirements. Collaborative discussions help identify critical performance metrics and ensure that goals are aligned with overall business objectives.

■ Organizational capacity: Your organization's capacity covers its budget, human resources, tech setup, and overall operations. Evaluating this capacity is key to smart APM prioritization. Avoid spreading resources too thin, as overly ambitious goals might compromise stability and long-term success.
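As a rough illustration of the benchmark point above, the sketch below checks a measured value against both the team's own target and an industry reference. The class and function names are hypothetical, and the 1.4% measured error rate is made up for the example; the 2% objective and 0.5% benchmark are the figures used in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerformanceGoal:
    """A 'lower is better' goal, such as an error rate or a page load time."""
    name: str
    target: float     # the objective the team set for itself
    benchmark: float  # the industry reference value


def evaluate(goal: PerformanceGoal, measured: float) -> dict:
    """Compare a measured value with the internal target and the benchmark."""
    return {
        "meets_target": measured <= goal.target,
        "meets_benchmark": measured <= goal.benchmark,
        # True when the internal goal is looser than the industry reference.
        "target_weaker_than_benchmark": goal.target > goal.benchmark,
    }


# Figures from the text: a 2% objective against a 0.5% benchmark.
error_rate = PerformanceGoal("error_rate_percent", target=2.0, benchmark=0.5)

# Hypothetical measurement: 1.4% of requests failed.
report = evaluate(error_rate, measured=1.4)
print(report)
```

Here the application meets its own objective but still misses the benchmark, which is exactly the gap a benchmark-aware goal is meant to expose.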

2. Build the foundation: Monitor the MELT

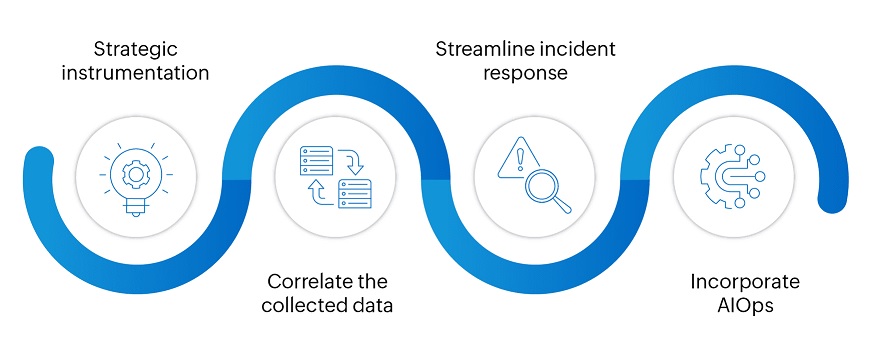

To ensure a solid foundation for application performance monitoring and observability, focus on MELT: Metrics, Events, Logs, and Traces. This approach enables organizations to gain comprehensive insights, identify anomalies, and troubleshoot issues efficiently. Monitoring MELT forms the cornerstone of proactive performance management and optimization. How do you build upon this foundation? Here are best practices that embrace the four essential components you should consider when implementing observability:

■ Strategic instrumentation: Don't drown yourself in a data tsunami. Identify the key metrics, events, and traces relevant to your specific performance goals. For example, an e-commerce platform might prioritize shopping cart abandonment metrics and checkout page performance, while a social media platform might focus on content loading times and engagement events.

■ Correlate the collected data: Use platforms and dashboards that integrate all the MELT elements. This facilitates cross-referencing and pattern identification, eliminating the need to switch between disparate data sources. Ensure that you receive timely warnings of potential issues through alerts, triggers set on predefined thresholds, and correlation. Most importantly, use the correlated data to drill down to the root cause of anomalies and thoroughly understand the "why" before implementing fixes.

■ Streamline incident response: Implement thorough monitoring across all layers of the application stack, including infrastructure, networks, and code, so that all potential issues are captured. Set up automated alerts to notify the relevant teams when anomalies or issues are detected. Develop and maintain incident response playbooks that outline predefined steps for addressing common issues. Finally, conduct thorough post-incident analyses to identify root causes and areas for improvement.

■ Incorporate AIOps: In application observability, AIOps is designed to help ingest, analyze, and correlate the vast amount of data generated. Organizations that implement AIOps blindly, without a clear vision and strategic framework, risk misaligned priorities, inefficient resource utilization, and a failure to realize its full potential. Before jumping on the AIOps bandwagon, define specific goals for your AIOps implementation: Do you want to automate anomaly detection, remediation, or performance prediction? Once you set your target, continually monitor the performance of your AI model, validate the accuracy of its predictions, and tweak and optimize it for more accurate insights and effective actions.
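To make the threshold-and-correlation idea concrete, here is a minimal sketch that flags latency samples above a predefined threshold and then pulls the log messages that share the same trace ID. The record types, field names, sample data, and the 800 ms threshold are all assumptions made for illustration; a real APM platform would supply its own data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricSample:
    trace_id: str
    endpoint: str
    latency_ms: float


@dataclass(frozen=True)
class LogRecord:
    trace_id: str
    message: str


def find_breaches(samples, threshold_ms):
    """Return the samples whose latency exceeds the predefined threshold."""
    return [s for s in samples if s.latency_ms > threshold_ms]


def correlate(breaches, logs):
    """Map each breaching trace ID to the log messages recorded for it."""
    by_trace = {}
    for record in logs:
        by_trace.setdefault(record.trace_id, []).append(record.message)
    return {b.trace_id: by_trace.get(b.trace_id, []) for b in breaches}


# Hypothetical data: two requests, one of them slow.
samples = [
    MetricSample("t-1", "/checkout", 320.0),
    MetricSample("t-2", "/checkout", 1450.0),
]
logs = [
    LogRecord("t-1", "payment authorized"),
    LogRecord("t-2", "inventory service timeout, retrying"),
    LogRecord("t-2", "payment authorized"),
]

breaches = find_breaches(samples, threshold_ms=800.0)
context = correlate(breaches, logs)
print(context)
```

Joining on a shared identifier is what lets an alert arrive with its "why" attached instead of as a bare number.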


3. Prioritize user-centric metrics: Enhance user experience

Businesses often grapple with understanding whether the much-anticipated "aha moment" in their applications truly brings delight or frustration to users. While server-side metrics provide valuable insights, they reveal only part of the narrative. To truly understand your application's performance, you need to see it from the user's perspective. For example, say you are launching a new application and the Apdex score, which measures the satisfaction level of the end user, suddenly falls from a good 0.9 to an average 0.75. This could mean a lot of things: your users might be having trouble accessing the application across geographies or with specific transactions. A robust end-user experience strategy will enable you to discern whether the problem stems from sluggish load times following a new feature deployment or from concurrent user sessions. Consider implementing these three best practices:

■ Set up synthetic transaction monitoring: Create synthetic scenarios that mimic real user actions to identify potential problems. Incorporate variations in user paths, session lengths, and interactions to simulate diverse engagement. Test your application's performance across multiple locations globally.

■ Monitor real user metrics: Employ a comprehensive approach to real user monitoring, capturing metrics like page load times, rendering performance, transaction success rates, and error rates. Prioritize optimization efforts for critical paths that significantly influence user satisfaction and business objectives.

■ Embrace an integrated approach: Contextually link back-end infrastructure metrics with front-end performance to gain a holistic perspective. Create an ongoing feedback loop between your back-end and front-end development teams, encouraging collaboration and the exchange of insights. Through this iterative process, teams can work together to tackle performance challenges, optimize the application, and uphold a consistently smooth user experience.
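For readers unfamiliar with how an Apdex score is derived, the sketch below applies the standard Apdex formula: responses at or under a threshold T count as satisfied, responses between T and 4T count as tolerating (weighted by half), and anything slower counts as frustrated. The 0.5-second threshold and the sample response times are assumptions chosen so that the scores land on the 0.9 and 0.75 values mentioned above.

```python
def apdex(response_times_s, threshold_s):
    """Apdex = (satisfied + tolerating / 2) / total samples."""
    if not response_times_s:
        raise ValueError("at least one response time is required")
    satisfied = sum(1 for t in response_times_s if t <= threshold_s)
    tolerating = sum(
        1 for t in response_times_s if threshold_s < t <= 4 * threshold_s
    )
    # Frustrated samples (slower than 4T) contribute nothing to the score.
    return (satisfied + tolerating / 2) / len(response_times_s)


T = 0.5  # hypothetical target response time, in seconds

# Hypothetical samples before the drop: 8 satisfied, 2 tolerating.
before = [0.2] * 8 + [1.0] * 2
# Hypothetical samples after the drop: 6 satisfied, 3 tolerating, 1 frustrated.
after = [0.2] * 6 + [1.0] * 3 + [3.0]

print(apdex(before, T))  # 0.9
print(apdex(after, T))   # 0.75
```

Breaking the score down this way shows that a drop from 0.9 to 0.75 can come from only a couple of users in ten moving into slower buckets, which is why it helps to segment the samples by geography or transaction.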

4. Consolidate your tool ecosystem: Curb tool proliferation

In the early stages of managing application performance, it's often tempting to adopt a separate tool for each specific need, which leads to tool proliferation. While this might seem easy to manage at first, it becomes challenging when tools pile up and bombard you with notifications. Imagine having a separate tool for monitoring your database that generates about 80 alerts daily. Amidst this deluge, even valid alerts can get lost, and without proper context it is hard to judge how much an issue matters in the broader picture. Specialized tools each tackle specific facets of an issue and often operate independently, which leads to fragmented insights. This complicates operations and can escalate costs without improving performance.

The remedy is to move away from a "tool for every issue" mentality and embrace a solution that unifies operations and provides actionable insights. Minimizing tool sprawl delivers significant benefits by consolidating insights, simplifying processes, and fostering a more streamlined monitoring approach. But replacing every tool at once (say, the 40 separate tools that might be used in the organization) is often impractical. An efficient APM solution can replace a subset of them while integrating with the rest.

5. Emphasize security and compliance: Ensure stability

Did you know that the attack surface of applications is ever increasing, with more than 29,000 new vulnerabilities identified in the last year alone? What's interesting is that not all of these vulnerabilities were introduced during the coding process; a good chunk of them were inherited from application components like libraries or frameworks. This calls for a paradigm shift. It's time to move beyond traditional uptime and resource metrics and embrace a holistic approach that integrates security and compliance. How can you guarantee the long-term sustainability of your application security and compliance practices? Here are some best practices:

■ Prioritize what matters the most: When dealing with a growing number of application vulnerabilities, organizations should focus on the most critical issues first. Relying solely on the Common Vulnerability Scoring System (CVSS) doesn't provide a comprehensive view. Runtime context enables a deeper understanding, allowing organizations to assess whether their hosting service is actively under attack and to adjust CVSS scores to better reflect the actual severity of each vulnerability.

■ Implement strict access controls: Uphold stringent access controls so that only authorized users can reach your applications and data. Ensure that your APM tool enables you to regularly review and update access permissions in line with your organizational roles and responsibilities.

■ Manage your containers: As organizations integrate containerization into their software deployment strategy, maintaining security is paramount. To safeguard against vulnerabilities, it's critical to run automated scans for vulnerabilities in both proprietary and open-source code throughout the entire CI/CD pipeline.

■ Adhere to regulatory standards: It's crucial to identify the regulations relevant to your business, considering factors like location, industry, and the types of data you manage. Be it HIPAA, PCI-DSS, or the GDPR, it is wise to conduct routine audits and assessments to ensure your application consistently complies with the required standards.

Establish a successful application performance monitoring strategy for your organization with ManageEngine Applications Manager. This tool helps you define and track performance goals, ensuring your applications consistently meet predefined benchmarks. Interested in learning more about Applications Manager? Schedule a free personalized demo with one of our solution experts today, or explore on your own through a 30-day free trial.