Mezmo unveiled its Observability Pipeline, which enables teams to control, enrich, and correlate machine data for actionable insights and faster decisions.

Mezmo's Observability Pipeline helps organizations better control their observability data and get increasing business value from it. It centralizes the flow of data from various sources, adds context to make that data more valuable, and then routes it to the destinations where teams can act on it.
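The collect, enrich, and route flow described above is a common observability pipeline pattern. The following Python sketch illustrates it in memory and is not meant as a real implementation. The `Pipeline` class, the `add_environment` enricher, and the destination names `alerting` and `archive` are hypothetical. They do not reflect Mezmo's product, API, or configuration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical sketch, not Mezmo's API: an event is modeled as a plain dict.
Event = Dict[str, object]


@dataclass
class Pipeline:
    """Illustrative three-stage pipeline: collect, enrich, route."""
    enrichers: List[Callable[[Event], Event]] = field(default_factory=list)
    # Each route pairs a predicate with the name of a destination.
    routes: List[Tuple[Callable[[Event], bool], str]] = field(default_factory=list)
    # In-memory stand-ins for real destinations such as a SIEM or object store.
    sinks: Dict[str, List[Event]] = field(default_factory=dict)

    def process(self, events: List[Event]) -> None:
        for event in events:
            # Stage 2: add context to each event.
            for enrich in self.enrichers:
                event = enrich(event)
            # Stage 3: deliver the event to every destination whose rule matches.
            for matches, destination in self.routes:
                if matches(event):
                    self.sinks.setdefault(destination, []).append(event)


def add_environment(event: Event) -> Event:
    # Hypothetical enrichment: tag every event with its deployment environment.
    return {**event, "env": "production"}


pipeline = Pipeline(
    enrichers=[add_environment],
    routes=[
        (lambda e: e.get("level") == "error", "alerting"),
        (lambda e: True, "archive"),
    ],
)

# Stage 1: events collected from several sources enter through one entry point.
pipeline.process([
    {"source": "syslog", "level": "info", "msg": "user login"},
    {"source": "fluentd", "level": "error", "msg": "disk full"},
])
print({name: len(events) for name, events in pipeline.sinks.items()})
# → {'archive': 2, 'alerting': 1}
```

Each event is checked against a list of predicate and destination pairs. This lets one event fan out to several systems, for example an alerting tool and a long-term archive, without duplicating collection.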

“Data provides a competitive advantage, but organizations struggle to extract real value. First-generation observability data pipelines focus primarily on data movement and control, reducing the amount of data collected, but fall short on delivering value. Preprocessing data is a great first step,” said Tucker Callaway, CEO, Mezmo. “We’ve built on that foundation and our success in making log data actionable to create a smart observability data pipeline that enriches and correlates high volumes of data in motion to provide additional context and drive action.”

Mezmo’s Observability Pipeline provides access and control to ensure that the right data is flowing into the right systems in the right format for analysis, minimizing costs and enabling new workflows. This smart pipeline integrates Mezmo’s best-in-class log analysis features, including search, alerting, and visualization capabilities, to augment and analyze data in motion, delivering intelligent, actionable insights to mitigate risk and make decisions faster.

The flexible, easy-to-use solution enriches workflows, streamlines the adoption of best practices, and enables new observability data use cases. Customers can route data from any source, such as cloud platforms, Fluentd, Logstash, and Syslog, to many destinations, including Splunk, S3, and Mezmo’s Log Analysis platform.

Support for OpenTelemetry further simplifies data ingestion and makes data more actionable by enriching OpenTelemetry attributes.

Mezmo also helps transform sensitive data, such as personally identifiable information (PII), to meet regulatory and compliance requirements. Control features simplify the management of multiple sources and destinations while protecting against runaway data flow.
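To make the two control ideas in the paragraph above more concrete, here is a generic Python sketch of regex-based PII redaction and a token-bucket rate limiter that caps event throughput. Both are common techniques chosen here for illustration. The names `PII_PATTERNS`, `redact`, and `TokenBucket` are hypothetical, and the patterns cover only a tiny fraction of real PII. None of this describes how Mezmo implements these features.

```python
import re
import time

# Hypothetical sketch, not Mezmo's API. Illustrative patterns only;
# production PII detection needs far broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(message: str) -> str:
    """Replace each PII match with a label such as [REDACTED:email]."""
    for label, pattern in PII_PATTERNS.items():
        message = pattern.sub(f"[REDACTED:{label}]", message)
    return message


class TokenBucket:
    """Caps throughput so a misbehaving source cannot flood downstream systems."""

    def __init__(self, rate_per_sec: float, burst: int) -> None:
        self.rate = rate_per_sec
        self.capacity = burst
        self.tokens = float(burst)
        self.last = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        # Refill tokens in proportion to elapsed time, up to the burst size.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the limit: the caller may drop, sample, or buffer


limiter = TokenBucket(rate_per_sec=1, burst=5)
line = "login failed for jane.doe@example.com, SSN 123-45-6789"
print(redact(line))
# → login failed for [REDACTED:email], SSN [REDACTED:us_ssn]

# A burst of 20 events in quick succession: only the burst allowance passes.
accepted = sum(limiter.allow() for _ in range(20))
print(accepted)
```

The token bucket allows short bursts up to `burst` events, then holds sustained throughput to `rate_per_sec`. That is one simple way to keep a sudden spike from overwhelming downstream destinations.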

The Latest

According to Auvik's 2025 IT Trends Report, 60% of IT professionals feel at least moderately burned out on the job, with 43% stating that their workload is contributing to work stress. At the same time, many IT professionals are naming AI and machine learning as key areas they'd most like to upskill ...

Businesses that face downtime or outages risk financial and reputational damage, as well as reducing partner, shareholder, and customer trust. One of the major challenges that enterprises face is implementing a robust business continuity plan. What's the solution? The answer may lie in disaster recovery tactics such as truly immutable storage and regular disaster recovery testing ...

IT spending is expected to jump nearly 10% in 2025, and organizations are now facing pressure to manage costs without slowing down critical functions like observability. To meet the challenge, leaders are turning to smarter, more cost effective business strategies. Enter stage right: OpenTelemetry, the missing piece of the puzzle that is no longer just an option but rather a strategic advantage ...

Amidst the threat of cyberhacks and data breaches, companies install several security measures to keep their business safely afloat. These measures aim to protect businesses, employees, and crucial data. Yet, employees perceive them as burdensome. Frustrated with complex logins, slow access, and constant security checks, workers decide to completely bypass all security set-ups ...

In MEAN TIME TO INSIGHT Episode 13, Shamus McGillicuddy, VP of Research, Network Infrastructure and Operations, at EMA discusses hybrid multi-cloud networking strategy ...

In high-traffic environments, the sheer volume and unpredictable nature of network incidents can quickly overwhelm even the most skilled teams, hindering their ability to react swiftly and effectively, potentially impacting service availability and overall business performance. This is where closed-loop remediation comes into the picture: an IT management concept designed to address the escalating complexity of modern networks ...

In 2025, enterprise workflows are undergoing a seismic shift. Propelled by breakthroughs in generative AI (GenAI), large language models (LLMs), and natural language processing (NLP), a new paradigm is emerging — agentic AI. This technology is not just automating tasks; it's reimagining how organizations make decisions, engage customers, and operate at scale ...

In the early days of the cloud revolution, business leaders perceived cloud services as a means of sidelining IT organizations. IT was too slow, too expensive, or incapable of supporting new technologies. With a team of developers, line of business managers could deploy new applications and services in the cloud. IT has been fighting to retake control ever since. Today, IT is back in the driver's seat, according to new research by Enterprise Management Associates (EMA) ...

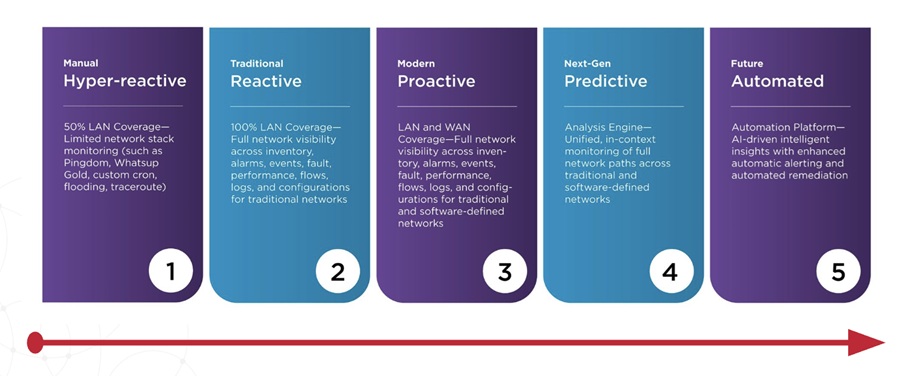

In today's fast-paced and increasingly complex network environments, Network Operations Centers (NOCs) are the backbone of ensuring continuous uptime, smooth service delivery, and rapid issue resolution. However, the challenges faced by NOC teams are only growing. In a recent study, 78% state network complexity has grown significantly over the last few years while 84% regularly learn about network issues from users. It is imperative we adopt a new approach to managing today's network experiences ...

From growing reliance on FinOps teams to the increasing attention on artificial intelligence (AI), and software licensing, the Flexera 2025 State of the Cloud Report digs into how organizations are improving cloud spend efficiency, while tackling the complexities of emerging technologies ...