The 11th anniversary of the Apple App Store marks a momentous period in how we interact with each other and with the services we have come to rely on. Even so, our in-app mobile experiences are still marred by poor performance and instability. Apple has done little to help, and other tools offer little to no visibility, and few benchmarks beyond crashes, by which to prioritize our efforts.

Eleven years ago, Apple opened its App Store. While other stores existed, none was tied to what would become our primary medium for interacting with the world: the iPhone. Previously, we interacted through browsers, which had their limitations, chiefly being tied to a stationary or bulky computer. With the App Store, and now a capable hardware device in our pockets, the opportunity for higher-fidelity experiences emerged. First came games and social experiences similar to those in the browser. They were not an innovative leap from browser-based games and social networks, but people readily adopted them because they were what we were already using and, more importantly, they were now available to us at any time of day. Those experiences then improved rapidly to take advantage of the capabilities of the phone itself. Games improved, social experiences improved, and the way we interacted with the world changed. Humans are drawn to the lowest-friction experiences. Conversations became text and chat, marketing became push notifications and SMS, purchasing became instantaneous, and short-form video clips became streaming, augmented, always-on experiences. Even ordering food, hailing a cab, and checking in at work changed, because each was so much easier to do on a phone and “seemingly” a better experience.

The shift in where we spent our time happened extremely fast, but the tools developers needed to build those streamlined experiences did not keep pace. Initially, the focus was on acquiring users and developing features; stability was an afterthought. Accordingly, Apple released a single metric, crashes, along with only limited capabilities for fixing them. Even today, most developers don't know that Apple provides a crash reporting tool, and, to be honest, those who do dismiss it as unusable. Crashes became the de facto, and only, benchmark of app quality: an app with 99% crash-free sessions is considered relatively good.
Apple did promote another benchmark, ratings, but ratings are easily gamed through prompts, and most users who have unstable or poor experiences simply walk away without rating the app. We all have issues with apps. It's not uncommon to see someone swearing at their phone or restarting it to solve a problem. (It reminds me a lot of saying a silent prayer and restarting an old desktop.) To help developers adapt and improve all of our experiences, below are some new benchmarks that apps should meet to provide a genuinely stable experience. In fact, Apple is rumored to be using, or soon to use, many of these benchmarks to determine App Store rankings, and Google has already started doing so. Note that only monitoring tools track these benchmarks, and fewer than 1% of apps use a dedicated monitoring tool, although adoption is growing rapidly.

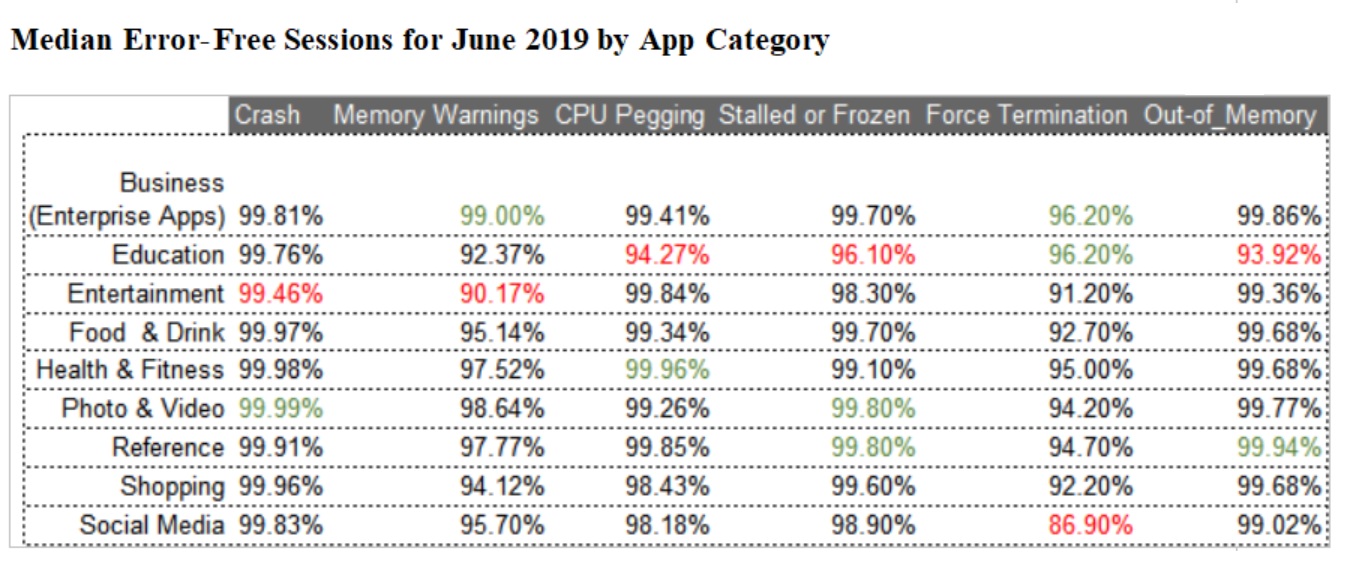

How to Read These Benchmarks: These benchmarks indicate the percentage of clean sessions for each app category by error type. Overall app health is based on a combination of all these metrics and is not shown here for simplicity. For example, a Force Termination occurs when a user leaves an app and immediately terminates it from memory while it is still running. CPU Pegging occurs when the processor is fully maxed out and the app is unable to run.

Key Findings:

- Crash session scores across all verticals are over 99%, which is expected, since crashes have been developers' primary focus to date.
- Social Media apps have the worst Force Termination score, which may indicate a larger issue that is going unreported, such as a broken registration or a frozen startup.
- Shopping apps also had a poor Force Termination score, which could be attributed to users frustrated with a feature not working in the app, such as a failing purchase flow or a frozen item page.
- Entertainment and Education apps perform worst across several categories, an indication of instability, app complexity, and the immaturity of these app types.

Now, on the App Store's 11th anniversary, it's time for us as app developers and teams to shift to these new benchmarks and provide our users with the experiences they deserve, and that they expect from a user-focused, high-fidelity brand like Apple.

Methodology: Embrace created a data set from the top 100 iOS apps. User experiences were measured by their actual impact on users, including freezes and abrupt closes; abrupt closes include, but are not limited to, crashes. All data was collected between June 1 and June 30, 2019.
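The clean-session score behind these benchmarks is simple arithmetic: for each app category and error type, the share of sessions in which that error never occurred. The Python sketch below illustrates only that calculation. It assumes a hypothetical session record (a category name plus the set of error types observed during the session) and hypothetical error labels; it is not Embrace's actual pipeline, and it deliberately leaves out the combined overall-health score, whose formula is not described here.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical error-type labels, loosely named after the benchmark columns
# (crashes, Force Terminations, CPU Pegging). Not an official taxonomy.
ERROR_TYPES = ("crash", "force_termination", "cpu_pegging")


@dataclass
class Session:
    """One app session (hypothetical record shape, assumed for illustration)."""
    category: str                              # app category, e.g. "Shopping"
    errors: set = field(default_factory=set)   # error types seen in this session


def clean_session_rates(sessions):
    """Return {category: {error_type: percent of sessions free of that error}}.

    Each error type is counted at most once per session, so the result is a
    share of clean sessions, not a per-event error rate.
    """
    totals = defaultdict(int)                          # sessions per category
    affected = defaultdict(lambda: defaultdict(int))   # category -> error -> sessions hit
    for s in sessions:
        totals[s.category] += 1
        for err in s.errors:
            affected[s.category][err] += 1
    return {
        cat: {err: 100.0 * (n - affected[cat][err]) / n for err in ERROR_TYPES}
        for cat, n in totals.items()
    }


if __name__ == "__main__":
    # 100 hypothetical Shopping sessions: 97 clean, 2 force-terminated,
    # 1 that both crashed and was force-terminated.
    sessions = (
        [Session("Shopping") for _ in range(97)]
        + [Session("Shopping", {"force_termination"}) for _ in range(2)]
        + [Session("Shopping", {"crash", "force_termination"})]
    )
    print(clean_session_rates(sessions)["Shopping"])
    # {'crash': 99.0, 'force_termination': 97.0, 'cpu_pegging': 100.0}
```

In this made-up example the app clears the familiar 99% crash-free bar while only 97% of its sessions are free of Force Terminations, which is the kind of gap a crash-only benchmark would miss.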