The education sector is undergoing significant change. National enrollment for higher education has declined 3 percent across the United States since 2015, according to the 2017 National Student Clearinghouse Research Center annual study. Decreased student enrollment is affecting many higher education institutions, resulting in increased competition, student demands for more cutting-edge facilities, and shrinking profit margins, even as course fees reach all-time highs.

In one effort to respond to decreased admissions, many colleges and universities are focusing on a student-centered experience. For example, in addition to traditional classrooms, distance learning and mobile device support are being introduced to attract more millennials and older students. According to the 2017 Education infographic by Livestream, 77 percent of colleges offer online courses and 55 percent of college presidents predict that by 2022, all students will take at least some of their classes online.

However, implementing this new digital technology is not enough. Colleges and universities also need to ensure appropriate network and application performance for their distance learning feeds. Because live-stream and on-demand video feeds are critically important, performance issues cannot be tolerated. This is a prime concern for both universities and K-12 institutions.

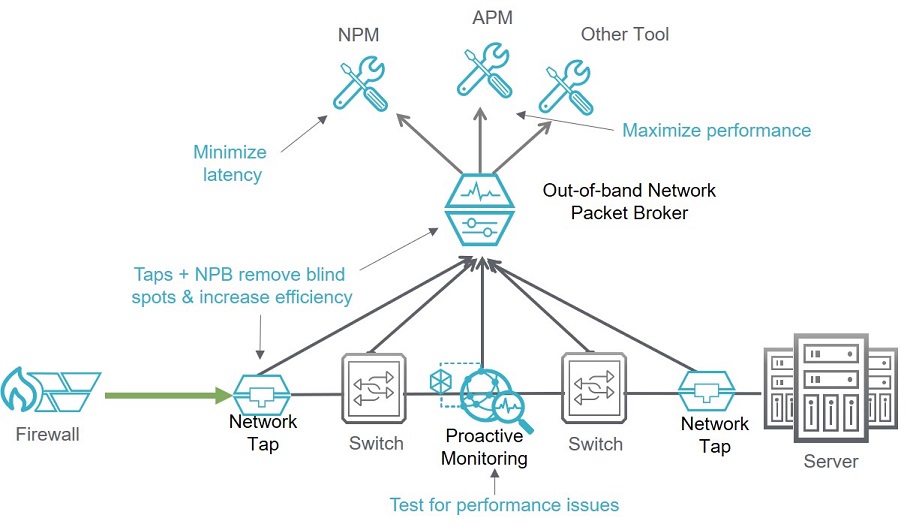

The key ingredient to optimum network and application performance is monitoring. You have to know what the network and its applications are, or are not, doing. Network visibility equipment, like taps and network packet brokers, can help.

Here is a list of clear visibility actions that can be implemented to address performance issues:

■ Remove on-premises blind spots – This is accomplished by installing taps and network packet brokers (NPBs). Together, these two components give you access to network performance monitoring (NPM) data across your whole network.

■ Identify and document your network latency on a per-segment basis, as needed – A proactive monitoring solution can be used to characterize your network. By actively testing between probes placed throughout your network, you can identify the latency, throughput, and quality of service across your whole network or just parts of it. This allows you to understand performance issues and pinpoint where they occur.

■ Perform root cause analysis and debugging of performance issues – Once the taps and packet brokers are installed, network data can be captured and sent to application performance monitoring (APM) tools for performance problem correlation and analysis, so that you maintain student quality of experience (QOE).

■ Remove cloud blind spots – Just as with the on-premises blind spot situation, cloud-based online education feeds can have issues as well. In this situation, a cloud visibility solution can be installed to collect packet-based data for those cloud apps and then the data can be passed on to an APM tool for analysis and QOE optimization.

■ Actively test network performance – Adding new applications to the network can affect its performance. A synthetic traffic generator allows you to respond to complaints about application or network slowness by actively testing different segments within your network to isolate and remediate problems faster. It can also be used to test software updates before they go live.
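As a rough illustration of the per-segment latency characterization described in the list above, the Python sketch below summarizes round-trip latency samples collected between probes and flags the segments that exceed a limit. Everything in it is an assumption made for illustration: the segment names, the sample values, the 100 ms limit, and the function names are hypothetical and do not come from any particular monitoring product.

```python
import math
from statistics import mean, median


def summarize_segment(samples_ms):
    """Summarize round-trip latency samples (in ms) for one network segment."""
    if not samples_ms:
        raise ValueError("at least one latency sample is required")
    ordered = sorted(samples_ms)
    # Nearest-rank 95th percentile: the smallest sample that is greater than
    # or equal to 95 percent of all samples.
    rank = max(1, math.ceil(0.95 * len(ordered)))
    p95 = ordered[rank - 1]
    # Jitter here is the mean absolute difference between consecutive
    # samples, taken in the order they were measured.
    if len(samples_ms) > 1:
        jitter = mean(abs(b - a) for a, b in zip(samples_ms, samples_ms[1:]))
    else:
        jitter = 0.0
    return {"median_ms": median(ordered), "p95_ms": p95, "jitter_ms": jitter}


def flag_slow_segments(measurements, p95_limit_ms):
    """Return the names of segments whose p95 latency exceeds the limit."""
    return sorted(
        name
        for name, samples in measurements.items()
        if summarize_segment(samples)["p95_ms"] > p95_limit_ms
    )


# Hypothetical probe-to-probe measurements, in milliseconds.
SAMPLE_MEASUREMENTS = {
    "campus-core -> library": [4, 5, 4, 6, 5],
    "campus-core -> dormitory": [20, 35, 180, 40, 25],
}

if __name__ == "__main__":
    for segment, samples in SAMPLE_MEASUREMENTS.items():
        print(segment, summarize_segment(samples))
    print("Over limit:", flag_slow_segments(SAMPLE_MEASUREMENTS, p95_limit_ms=100))
```

Using a high percentile rather than the average is a deliberate choice in this sketch: occasional latency spikes are what viewers of a live video feed tend to notice, and an average can hide them.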

The diagram below illustrates how these technologies can be inserted into a generic education network.

The key point to remember is that acquiring the right monitoring data is the most important ingredient to creating and maintaining network and application performance.