The mass rush to work-from-home (WFH) that took place back in the spring was a shock to the system for many enterprise networks. But with summer closing out and the prospects of a near-term return to office-centric workflows looking increasingly slim, enterprise IT teams that haven't gotten comfortable with their "new normal" need to start making changes fast.

But getting familiar with network connections your team doesn't own or control can be tricky for enterprise IT teams that are accustomed to managing a primarily branch-office footprint. These teams now rely on connectivity beyond the traditional network edge to give users access to the network resources and apps they need to stay productive.

Unlike commercial connectivity, these residential connections (the "last mile" links between residential workstations and the network edge) aren't backed by ISP SLAs that guarantee upload and download speeds. Instead, this access is delivered "best effort," meaning any number of factors could affect how much network capacity actually reaches a residential workstation.

To begin with, residential connections typically fall well short of office network speeds, which puts workers at a performance disadvantage even before random variances in last-mile delivery come into play.

But what may be surprising is just how this variance plays out over a widely distributed enterprise footprint.

To help get ahead of issues impacting WFH users, teams first need to understand what they're working with when it comes to the new stakeholders involved in connecting end users with corporate network resources. This includes gaining a true understanding of how the ISPs responsible for last-mile connectivity at each home location are performing, as well as ensuring that the capacity delivered to each user's home is adequate for the job.

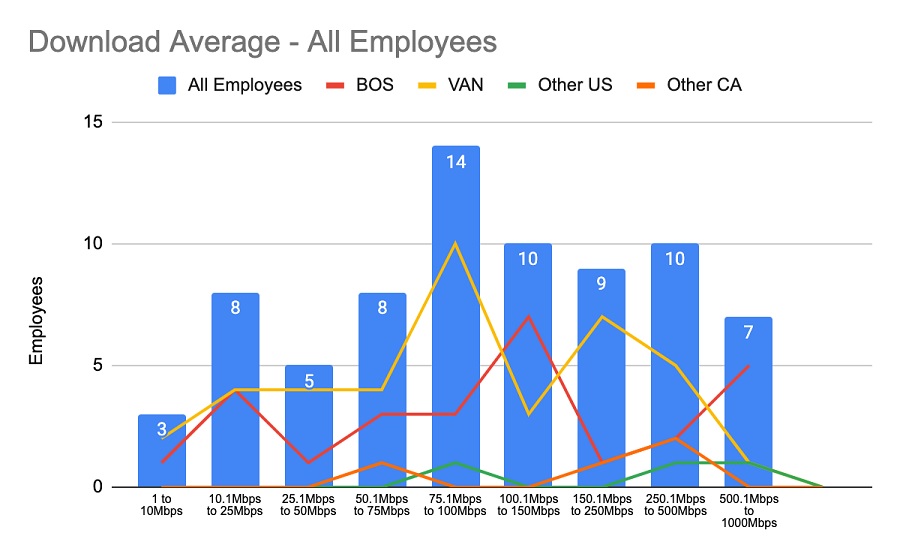

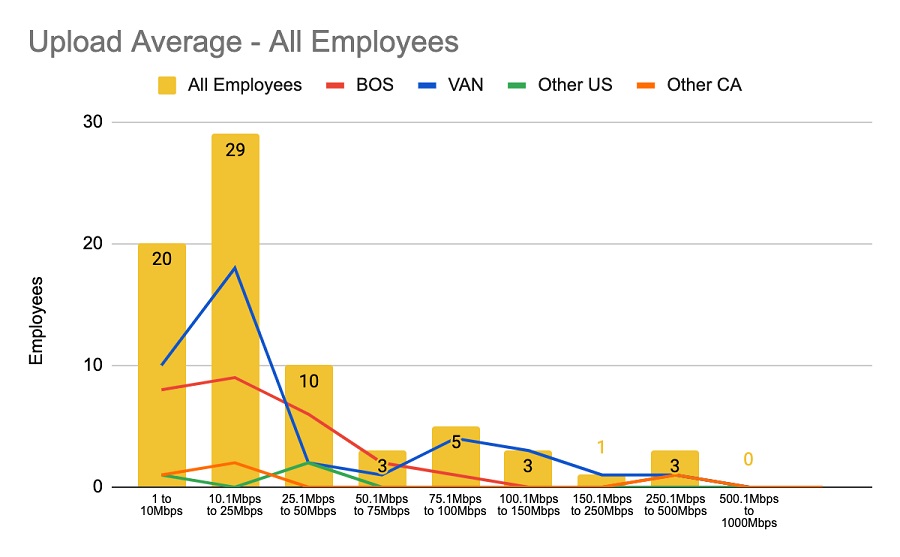

When we at AppNeta closed our Boston and Vancouver offices back in March, we conducted an initial survey of our own WFH network connections, a practice we recommend every systems team adopt to establish a baseline of expectations for network performance out to critical team members. We found that not only are most users' residential ISP offerings far off the capacity levels they're used to experiencing in-office, but they're also not getting the full download and upload speeds that they've contracted for.
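A survey like this comes down to comparing measured capacity against what each user's plan promises. The sketch below is one minimal way to do that in Python. The `LinkSample` fields, the 0.8 threshold, and the figures in the usage note are illustrative assumptions, not AppNeta's survey data or tooling.

```python
from dataclasses import dataclass


@dataclass
class LinkSample:
    """One user's contracted vs. measured capacity (hypothetical record shape)."""
    user: str
    contracted_down_mbps: float
    contracted_up_mbps: float
    measured_down_mbps: float
    measured_up_mbps: float


def delivery_ratio(measured_mbps: float, contracted_mbps: float) -> float:
    """Fraction of the contracted capacity that was actually delivered."""
    if contracted_mbps <= 0:
        raise ValueError("contracted capacity must be positive")
    return measured_mbps / contracted_mbps


def flag_shortfalls(samples, threshold=0.8):
    """Return (user, down_ratio, up_ratio) for links delivering less than
    `threshold` of contracted capacity in either direction.
    The 0.8 default is an arbitrary assumption; tune it to your own needs."""
    flagged = []
    for s in samples:
        down = delivery_ratio(s.measured_down_mbps, s.contracted_down_mbps)
        up = delivery_ratio(s.measured_up_mbps, s.contracted_up_mbps)
        if min(down, up) < threshold:
            flagged.append((s.user, round(down, 2), round(up, 2)))
    return flagged
```

For example, with made-up numbers, a user contracted for 100 Mbps down and 10 Mbps up who measures 62 and 9 would be flagged on the download side, while a user measuring 190 and 19 against a 200/20 plan would not.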

Compounded by the stress on the user's home network from non-business apps used by others throughout the household, this misalignment between expected and delivered capacity can sink worker productivity across teams.

The last mile is where the bulk of performance issues arise in the WFH era, as reported by our own network management team and our enterprise customers. The fault may lie somewhere between the enterprise network edge and a user's residential workstation, but more likely it lies with the residential ISP. And while IT teams may not own, manage, or really control those residential last miles, they can still gain visibility into how that connection is performing to help resolve issues before they ripple across departments.

Teams need to understand what these variances are going to be so that even if they can't regain the seconds of dwell time lost to last-mile limitations, they at least have the data to baseline end-user expectations and inform improvements (and expected results) going forward. That means not only getting to the root of the problem (and proving innocence) fast, but also having the data handy to seek out resolution with the appropriate stakeholders.
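As a rough illustration of what baselining can look like, the sketch below summarizes repeated capacity measurements for one home connection and picks out readings that fall well below the norm. The function names, the choice of median and 10th percentile, and the 50% tolerance are assumptions made for this example rather than a prescribed method.

```python
import statistics


def baseline(samples_mbps):
    """Summarize repeated capacity measurements for one connection.
    Uses the median as the typical value and the 10th percentile as a
    rough "bad day" floor (both are assumptions for this sketch)."""
    if len(samples_mbps) < 2:
        raise ValueError("need at least two measurements to baseline")
    median = statistics.median(samples_mbps)
    # quantiles(n=10) returns nine cut points; the first is the 10th percentile.
    p10 = statistics.quantiles(samples_mbps, n=10, method="inclusive")[0]
    return {"median": median, "p10": p10}


def degraded(samples_mbps, base, tolerance=0.5):
    """Return measurements below `tolerance` times the baseline median."""
    return [x for x in samples_mbps if x < base["median"] * tolerance]
```

Collected over days or weeks, a summary like this gives IT a concrete number to set user expectations against and to bring to an ISP when delivered capacity repeatedly falls short.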