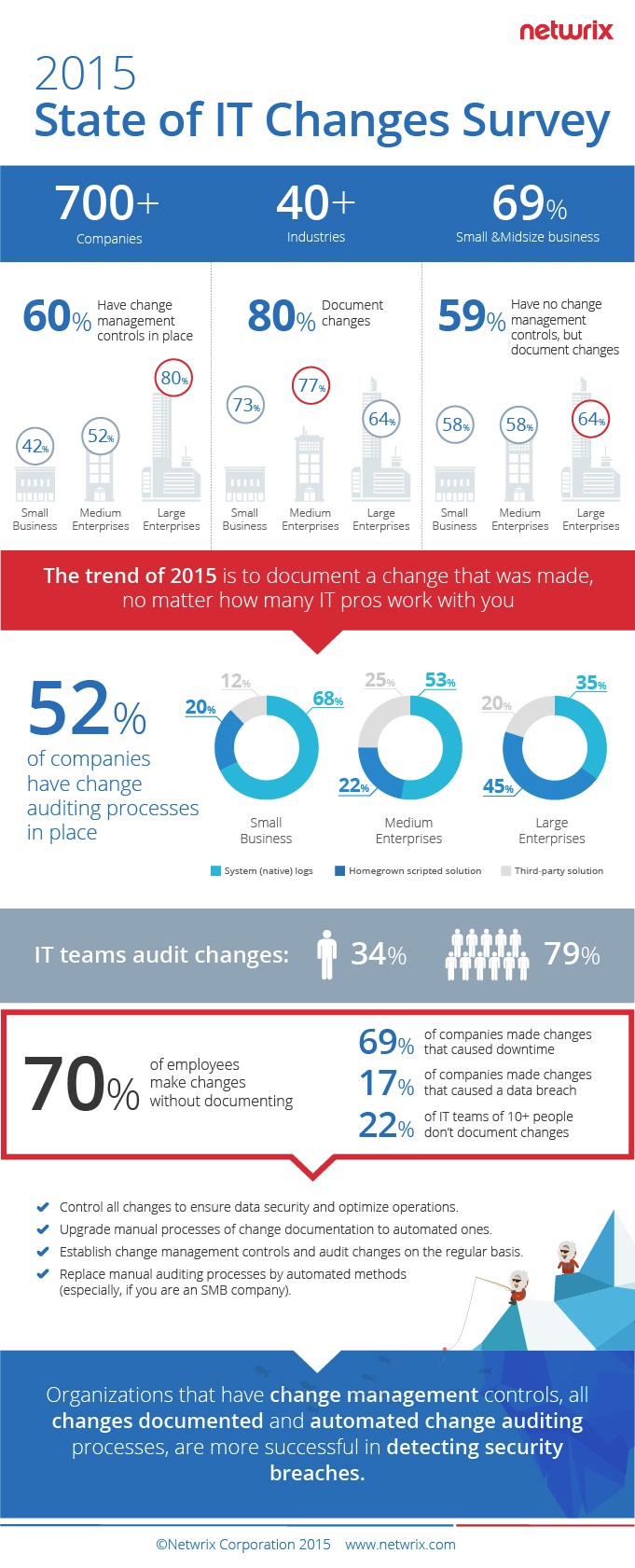

Almost three-fourths (70%) of companies make changes to their IT systems without documenting them, up from 57% last year, according to Netwrix Corporation's 2015 State of IT Changes Survey, which covered 700 IT professionals in more than 40 industries.

In addition, the number of large enterprises that make undocumented changes has increased by 20% to 66%.

Undocumented changes pose a hidden threat to business continuity and the integrity of sensitive data. The survey shows that 67% of companies suffer from service downtime due to unauthorized or incorrect changes to system configurations; enterprises are again the worst offenders, at 73%.

The report states: "Incorrect or unauthorized changes to system configurations can impact sustainability of business processes and cause IT services to stop. The majority of IT pros admit that they still are not able to control sustainable performance of their IT systems and continue to make changes that were a root cause of system downtime; the share has even increased throughout the year."

Although companies still have shortcomings in their change management policies, the overall 2015 results show a positive trend. More organizations have revised how they handle changes and have made some effort to establish auditing processes to gain visibility into their IT infrastructures.

Key survey findings:

■ 80% of organizations surveyed continue to claim they document changes; however, the number of companies that make undocumented changes has grown throughout the year and reached 70%. The frequency of those changes has also increased.

■ 58% of small companies surveyed have started to track changes despite the lack of change management controls, up from 30% last year.

■ Change auditing technology continues to gain ground: 52% of organizations surveyed have established change auditing controls, compared to 38% last year. Among enterprises surveyed, 75% (up from 52% in 2014) have established change auditing processes to monitor their IT infrastructures.

■ Organizations opt for several methods of change auditing at once. 60% of SMBs surveyed traditionally choose manual monitoring of native logs, whereas 65% of enterprises deploy automated auditing solutions.