This is the first in a three-part series drawn from Chapters Three and Twelve and from Appendix B of CMDB Systems: Making Change Work in the Age of Cloud and Agile. It is in no way a substitute for the book, but it should give you a good starting point for thinking about the technology selection process.

This three-part series will address technology options focused on the following areas:

1. Core CMDB

2. Application discovery and dependency mapping (ADDM)

3. Other associated technologies, such as relevant investments in automation, analytics, additional visualization and reporting options, and social IT

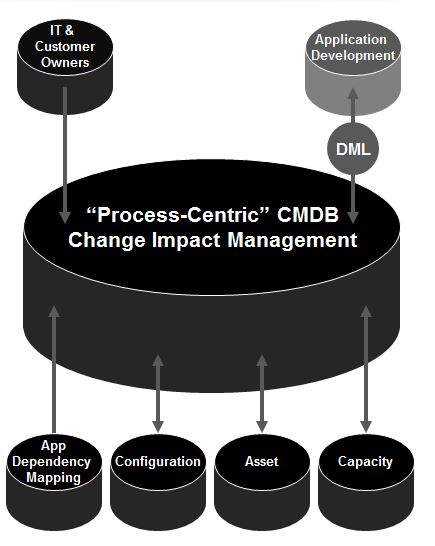

A core CMDB is part of a larger system and cannot be understood as a single, isolated technology. As shown here, a core CMDB works closely with CI owners, a definitive media library (DML) for integrated development processes, application dependency mapping, configuration automation, and asset management and capacity management tools.

Core CMDB: Packaging

Very few CMDB solutions are currently packaged as standalone products. You may, for instance, already have a CMDB embedded in your service desk that isn't yet in use. However, you may decide, for any number of reasons, that your current investment isn't the one to take you the whole distance. There is also a growing number of variations on the theme: some CMDBs are packaged primarily as business service management (BSM) solutions optimized for service impact and performance, others target workflow and automation, and still others are extensions of application discovery and dependency mapping tools.

Core CMDB: Deployment and Administration

What types of administrative efficiencies does the solution offer to support the deployment, maintenance, and evolution of a CMDB/CMS? These may include, but are not limited to:

■ Ease of initial CMDB/CMS population

■ Flexibility and ease of setting policies for discovery and reconciliation

■ Integrated analytics that support the reconciliation process

■ Ease of customizing and extending the reach of out-of-the-box models

■ Reports to support maintenance, scope, accuracy, and administration of the CMDB/CMS itself

■ Ease of entering domain expertise for CMDB updates

Problems with CMDB deployment and administration have become notorious over the last decade, but there has been significant progress. The following two quotes offer different perspectives on how that progress is becoming a reality.

“We dumbed down the CMDB to make it more consumable. Now our customers are accustomed to defining their own applications inside the system. Actually one thing I’d like to see in the industry is dumbing down the CMDB so the average person can use it.”

“Administration and deployability are very good. We basically went from nothing to designing our data model and imported all of our current CIs and Assets, retired our asset DB, and had our CMDB up and running within six days!”

Core CMDB: Architecture and Integration

When considering architecture and integration, ask the following questions:

■ Scalability: What is the highest number of CIs the solution is architected to support? What is the largest actual deployment? What is the largest number of users supported? How does the vendor define a CI, that is, at what level of granularity?

■ Range of discovery: Can the solution you’re evaluating, either natively or through third-party integrations, support discovery for network (layer 2 and/or 3), systems, applications, application components, third-party applications, Web and Web 2.0, storage, database, desktops, mobile devices, and virtualized environments? Can it discover configuration details for any or all of the above?

■ Application dependency: Does the solution support application discovery and dependency mapping, or dependency mapping in general, as an automated process, a manual process, or both?

■ Reconciliation and normalization: Does the solution you’re evaluating reconcile, normalize, and/or synchronize data from multiple (in-brand and third-party) sources? Can it support weightings for “trusted sources”? That is, can it prioritize one source over another for a certain CI?

■ Range of sources: Which data sources can the solution draw on? These might include inventory and discovery, other management data repositories (MDRs), text records, Excel files, service catalogs, service desks, performance management tools, security tools, asset management tools, and other configuration management tools. Ask the vendor to address both third-party sources and those within its own portfolio.
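To make the reconciliation and "trusted source" questions above more concrete, here is a minimal sketch of how attribute-level prioritization might work when several feeds report on the same CI. It is not any vendor's implementation: `SourceRecord`, `SOURCE_WEIGHTS`, the feed names, and the hostname-based normalization rule are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class SourceRecord:
    source: str                                      # hypothetical feed name, e.g. "discovery"
    ci_id: str                                       # identifier as reported by that feed
    attributes: dict = field(default_factory=dict)   # attribute name -> reported value

# Hypothetical "trusted source" weights: when feeds disagree, the higher weight wins.
SOURCE_WEIGHTS = {"discovery": 100, "asset_db": 50, "spreadsheet": 10}

def normalize_ci_id(raw_id: str) -> str:
    """Assumed normalization rule: trim, lowercase, and drop any DNS domain suffix."""
    return raw_id.strip().lower().split(".")[0]

def reconcile(records, weights=SOURCE_WEIGHTS):
    """Merge records into one entry per CI, keeping each attribute's value from the most trusted source."""
    best = {}  # ci_id -> {attribute: (weight, value, source)}
    for rec in records:
        ci = normalize_ci_id(rec.ci_id)
        weight = weights.get(rec.source, 0)          # unknown feeds get the lowest priority
        slot = best.setdefault(ci, {})
        for attr, value in rec.attributes.items():
            current = slot.get(attr)
            if current is None or weight > current[0]:
                slot[attr] = (weight, value, rec.source)
    # Drop the weights and report (value, winning_source) per attribute.
    return {ci: {attr: (value, src) for attr, (_, value, src) in attrs.items()}
            for ci, attrs in best.items()}

records = [
    SourceRecord("spreadsheet", "WEB01", {"os": "RHEL 7", "owner": "web-team"}),
    SourceRecord("discovery", "web01.example.com", {"os": "RHEL 8", "ip": "10.0.0.5"}),
]
merged = reconcile(records)
print(merged["web01"]["os"])     # ('RHEL 8', 'discovery')
print(merged["web01"]["owner"])  # ('web-team', 'spreadsheet')
```

Both records normalize to the same CI, so discovery's operating system value overrides the spreadsheet's, while the spreadsheet still supplies the owner that discovery does not report. Weighting per attribute rather than per record is one possible design; when evaluating a product, it is worth asking at which granularity its trusted-source rules actually apply.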

Functional Concerns

The questions you should pursue for functional evaluation fall into four main categories: Modeling and Metadata, Analytics, Automation, and Visualization. The last three will be examined in Part Three of this blog series, since they often involve integrations with other products or capabilities.

■ Modeling and Metadata: How versatile and extensible is the vendor's modeling in supporting various CI relationships, types, classes, sub-classes, and attributes? For instance, adjacency ('connected to') is useful for activating configuration automation, assessing the impact of changes, and performing triage, among other uses. 'Monitored by' is valuable in managing and optimizing the lifecycle of a CI as well as assessing the CI's health. 'Owner of' can point to an individual or an organization when there is a fairly fixed relationship between a CI (device, service, etc.) and its human or organizational owner. If the relationship is more fluid, CI 'owners' may be better mapped as attributes than as relationships. Most vendors natively support more than 1,000 CI attributes, while at the low end the figure may be fewer than 100.

■ Support for CI states: CI states are another critical area of interest. For instance, in managing change, it's important to be able to contrast the 'Actual' or 'Discovered' state with the 'Desired' or 'Approved' state. Another consideration is support for 'historical' or 'past' states, which enables trending and point-in-time snapshots. Some CMDB solutions also support 'future' or 'planned' states derived from approved production-level conditions. This can be especially valuable for supporting DevOps requirements.
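The relationship types described under Modeling and Metadata can be pictured as typed edges between CIs. The sketch below is a toy in-memory model, not a real CMDB schema or product API: `CIModel`, its methods, and the relationship names are hypothetical. It treats 'connected to' as two-way adjacency and walks it breadth-first to list every CI that a change might touch.

```python
from collections import defaultdict, deque

class CIModel:
    """Toy CI store with classes and typed relationships (hypothetical, for illustration only)."""

    SYMMETRIC = {"connected_to"}  # adjacency is treated as two-way in this sketch

    def __init__(self):
        self.ci_class = {}               # ci_id -> class, e.g. "server" or "application"
        self.edges = defaultdict(set)    # (ci_id, relationship) -> related ci_ids

    def add_ci(self, ci_id, ci_class):
        self.ci_class[ci_id] = ci_class

    def relate(self, source, relationship, target):
        self.edges[(source, relationship)].add(target)
        if relationship in self.SYMMETRIC:
            self.edges[(target, relationship)].add(source)

    def related(self, ci_id, relationship):
        return set(self.edges.get((ci_id, relationship), ()))

    def change_impact(self, ci_id):
        """Breadth-first walk over 'connected_to' edges: every CI reachable from ci_id."""
        seen, queue = {ci_id}, deque([ci_id])
        while queue:
            for neighbor in self.related(queue.popleft(), "connected_to"):
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        seen.discard(ci_id)
        return seen

model = CIModel()
for ci_id, ci_class in [("crm-app", "application"), ("web01", "server"), ("db01", "server")]:
    model.add_ci(ci_id, ci_class)
model.relate("crm-app", "connected_to", "web01")
model.relate("web01", "connected_to", "db01")
model.relate("web01", "monitored_by", "nagios")   # monitoring tool as a related CI
model.relate("crm-team", "owner_of", "crm-app")   # fixed ownership modeled as a relationship
print(sorted(model.change_impact("db01")))         # ['crm-app', 'web01']
```

Full transitive reachability over undirected adjacency is deliberately naive and will overstate impact in large environments; commercial tools typically refine it with directional dependencies and relationship weights. Following the text above, a more fluid owner would simply be stored as an attribute on the CI rather than as an `owner_of` edge.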

The following two quotes are particularly good examples of why solid CI state support can be a game changer:

"Our vendor supports the ability to generate and maintain snapshots of configuration in time, allowing us to better isolate and understand how application and infrastructure components affect each other. This streamlines troubleshooting and diagnostics."

"We maintain three CMDB instances: the production environment, a simulation environment, and a test environment. Our vendor’s configuration management database can accommodate all three instances."
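The 'Actual' versus 'Desired' comparison and the historical snapshots described under Support for CI states can be sketched in a few lines. This is an illustrative assumption of how such features behave, not a description of any particular vendor's product; `diff_states`, `SnapshotStore`, and the sample attributes are hypothetical.

```python
from datetime import datetime

def diff_states(desired: dict, actual: dict) -> dict:
    """Map each attribute whose 'Desired' and 'Actual' values differ to (desired, actual).
    An attribute present on only one side is reported with None on the other."""
    return {key: (desired.get(key), actual.get(key))
            for key in sorted(desired.keys() | actual.keys())
            if desired.get(key) != actual.get(key)}

class SnapshotStore:
    """Keeps timestamped copies of a CI's discovered state to support 'historical' queries."""

    def __init__(self):
        self._snapshots = []  # (timestamp, state) pairs, kept sorted by timestamp

    def record(self, taken_at: datetime, state: dict):
        self._snapshots.append((taken_at, dict(state)))
        self._snapshots.sort(key=lambda snap: snap[0])

    def as_of(self, when: datetime):
        """Return a copy of the latest snapshot taken at or before `when`, or None."""
        latest = None
        for taken_at, state in self._snapshots:
            if taken_at > when:
                break
            latest = state
        return dict(latest) if latest is not None else None

approved = {"os": "RHEL 8", "java": "17", "port": 443}
discovered = {"os": "RHEL 8", "java": "11", "port": 443, "telnet": "enabled"}
print(diff_states(approved, discovered))
# {'java': ('17', '11'), 'telnet': (None, 'enabled')}

history = SnapshotStore()
history.record(datetime(2024, 1, 1), {"java": "11"})
history.record(datetime(2024, 3, 1), {"java": "17"})
print(history.as_of(datetime(2024, 2, 15)))  # {'java': '11'}
```

The diff flags both drift from the approved value and unapproved additions, which is the kind of contrast change management depends on. The "as of" lookup is what lets a team ask how a CI looked before an incident, as in the first quote above.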

This is only a partial list of questions and criteria, but it should give you a quick sketch of how you might begin to frame your RFP.

Much more detail is provided in our book, including guidelines specifically for initiating an RFP. EMA's consulting services also offer guidance on planning requirements for CMDB system adoption and other technology adoption.

Dennis Drogseth is VP at Enterprise Management Associates (EMA).