Application Performance Management (APM) has traditionally been associated with solutions that instrument application code. This association has two fundamental limitations. First, if instrumenting code is what APM is all about, then APM applies only to homegrown applications whose source code is available.

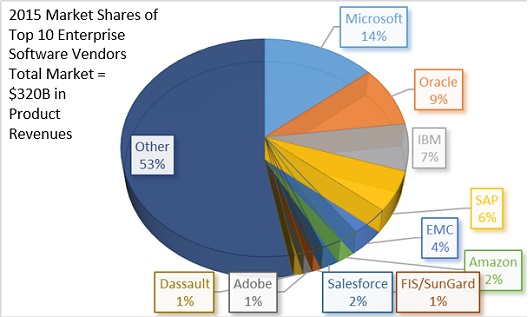

However, most business-critical applications are not homegrown. As the chart shows, the $320B enterprise software market is driven by vendors whose products ship without source code. That market covers the full range of commercial off-the-shelf (COTS) products, from corporate databases to Enterprise Resource Planning (ERP) systems and from cloud-enabled productivity tools to mission-critical vertical applications. Second, this traditional association overlooks the technical challenges of code instrumentation itself.

Source: Apps Run the World, 2016

Vendor-Provided Software

Traditional APM vendors focus on application software developed in-house, mainly applications built on web services. Their solutions employ Bytecode Instrumentation (BCI), a technique that injects additional bytecode into an application at run time, for example as Java classes are loaded. These solutions are developer-focused: if developers want to debug or profile code at run time, BCI is an effective tool.

In reality, enterprises depend on both in-house and vendor-provided software. The applications businesses use fall into two categories: 1) Business-Critical Applications and 2) Productivity Applications. Business-critical applications are the foundation on which business success depends, but productivity applications such as email are equally important to enterprises.

Roughly 80% of the applications enterprises use in either category are packaged applications supplied by vendors such as Microsoft, SAP, Oracle, PeopleSoft and others; only about 20% are developed in-house. In most cases, the in-house applications themselves wrap around vendor-provided software.

A common example is a set of web services customized for a particular business and backed by SAP. Because vendors do not provide the source code, code instrumentation techniques are not feasible for vendor-provided software.

Instrumenting in-house application software at various points gives a rich view for optimizing the application during development. However, there are several types of problems that such instrumentation simply cannot see, so it does not always deliver the complete visibility users think they are getting. Code instrumentation is also not "free": even with expensive commercial tools, it takes considerable coding skill (which is not widely available) to instrument code effectively without degrading the performance of production code.

Technical Challenges

Code instrumentation can report on the performance of your application software stack, but the service you offer customers depends on far more than software. It depends on all of the networks, load balancers, servers and databases; external services such as Active Directory and DNS; and the service providers and third parties you use to deliver the service.

Traditional APM products rely solely on BCI. They claim to be transaction management solutions, yet they are limited in what they can do even in Java environments and have no visibility into non-Java topologies.

A real transaction management product needs to follow each transaction across different types of application components, such as proxies, web servers, app servers (Java and non-Java), message brokers, queues and databases. Doing so requires visibility into several kinds of transaction-related data, some of which exists only in the actual payload of each request. Java code runs on a virtual machine, which hides parts of the underlying implementation from the Java layer. The Java Virtual Machine (JVM) itself is implemented in native code (HotSpot, for example, is written mostly in C++), so there are operating-system-specific pieces that are not accessible from Java and therefore not accessible through BCI techniques.

If you want to use fields of TCP/IP packets to trace a transaction between two servers, you cannot do it from the Java layer, because the actual packet structure is not accessible there. Information that is crucial for tracing transactions across more than just Java hops exists only below the Java code, out of reach of BCI, which limits the ability to trace transactions in real-world environments.
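To make packet-level tracing more concrete, here is a minimal Python sketch (an illustration, not AppEnsure's implementation) that correlates the same TCP segment observed at two capture points using header fields Java code never sees: the address/port 4-tuple and the TCP sequence number. The `SegmentRecord` type, its field names and the sample captures are all hypothetical. The sketch assumes the two capture points have synchronized clocks and that no NAT or load balancer rewrites addresses between them.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical record of one TCP segment as seen at a capture point
# (for example a network tap or a packet capture on a host).
@dataclass(frozen=True)
class SegmentRecord:
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    seq: int          # TCP sequence number from the segment header
    timestamp: float  # capture time in seconds; clocks assumed synchronized

FlowKey = Tuple[str, int, str, int, int]

def flow_key(rec: SegmentRecord) -> FlowKey:
    # The address/port 4-tuple plus the sequence number identifies the same
    # segment at both capture points, assuming no NAT in between.
    return (rec.src_ip, rec.src_port, rec.dst_ip, rec.dst_port, rec.seq)

def match_segments(sent: List[SegmentRecord],
                   received: List[SegmentRecord]) -> Dict[FlowKey, float]:
    """Return the transit time of each segment seen at both capture points.

    Retransmissions reuse a sequence number; this sketch simply keeps the
    first sighting at each point, which is a simplifying assumption.
    """
    first_sent: Dict[FlowKey, float] = {}
    for rec in sent:
        first_sent.setdefault(flow_key(rec), rec.timestamp)

    transit: Dict[FlowKey, float] = {}
    for rec in received:
        key = flow_key(rec)
        if key in first_sent and key not in transit:
            transit[key] = rec.timestamp - first_sent[key]
    return transit

# Usage with made-up captures from a web server and a database server.
web_side = [SegmentRecord("10.0.0.5", 51000, "10.0.1.7", 3306, 1000, 12.000)]
db_side = [SegmentRecord("10.0.0.5", 51000, "10.0.1.7", 3306, 1000, 12.004)]
for key, seconds in match_segments(web_side, db_side).items():
    print(key, f"{seconds * 1000:.1f} ms")
```

A production tool would also need to handle retransmissions, reordering and address translation; the point here is only that the correlation key lives in packet headers, below anything bytecode instrumentation can observe.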

Conclusion

For vendor-provided software, BCI is an ineffective technique. For in-house software, BCI lets programmers enhance the code they are developing. It is a necessary tool for development teams, but it is insufficient because it does not offer the visibility IT Operations needs to understand the performance of the application service delivery chain. If your business depends on mission-critical web or legacy applications, monitoring how your end users interact with those applications matters more than how well the code is written. The responsiveness of the application determines the end user's experience, and the true measures of that experience are the application's availability and response time, end to end and hop by hop, across the entire application service delivery chain.
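As a rough illustration of what "end to end and hop by hop" can mean, the following Python sketch splits one request's end-to-end response time into per-tier processing time and per-link network time. It assumes, hypothetically, that each tier records four timestamps for the request (arrival, forward downstream, reply from downstream, reply upstream); the `TierTiming` type and the sample numbers are made up. Because every subtraction uses timestamps from a single tier's own clock, the tiers' clocks need not be synchronized.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass
class TierTiming:
    """Hypothetical timestamps (seconds) one tier records for a single request."""
    name: str
    request_in: float                    # request arrives from upstream
    response_out: float                  # response sent back upstream
    request_out: Optional[float] = None  # request forwarded downstream
    response_in: Optional[float] = None  # response received from downstream

def hop_by_hop(chain: List[TierTiming]) -> List[Tuple[str, float]]:
    """Split end-to-end time into per-tier and per-network-link components.

    `chain` is ordered from the outermost tier to the innermost; only the
    last tier may omit its downstream timestamps.
    """
    parts: List[Tuple[str, float]] = []
    for i, tier in enumerate(chain):
        if i == len(chain) - 1:
            # Innermost tier: everything between arrival and reply is local work.
            parts.append((tier.name, tier.response_out - tier.request_in))
            break
        if tier.request_out is None or tier.response_in is None:
            raise ValueError(f"{tier.name} is missing downstream timestamps")
        nxt = chain[i + 1]
        # Local work before forwarding plus local work after the reply returns.
        local = (tier.request_out - tier.request_in) + (tier.response_out - tier.response_in)
        parts.append((tier.name, local))
        # Time this tier waited on its downstream call, minus the time the next
        # tier reports for itself, is attributed to the link between them.
        waited = tier.response_in - tier.request_out
        nxt_total = nxt.response_out - nxt.request_in
        parts.append((f"{tier.name}->{nxt.name} network", waited - nxt_total))
    return parts

# Usage with made-up timings; each tier's clock starts at its own zero.
chain = [
    TierTiming("web", 0.000, 0.250, request_out=0.010, response_in=0.240),
    TierTiming("app", 0.000, 0.200, request_out=0.050, response_in=0.180),
    TierTiming("db", 0.000, 0.100),
]
for label, seconds in hop_by_hop(chain):
    print(f"{label}: {seconds * 1000:.1f} ms")
```

The components telescope, so they always sum to the end-to-end time measured at the outermost tier, which makes it easy to see which hop dominates a slow response.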

Sri Chaganty is COO and CTO/Founder at AppEnsure.