Today, 96 percent of organizations have Digital Transformation initiatives on their roadmap, and more than half of those initiatives are already in progress, according to ESG's 2017 IT Spending Intentions Survey report, conducted in March 2017. There is clearly a huge appetite across enterprises to use innovation for competitive advantage, and this places a huge burden on businesses to deliver access to services, data and apps at any time and from anywhere, 24.7.365.

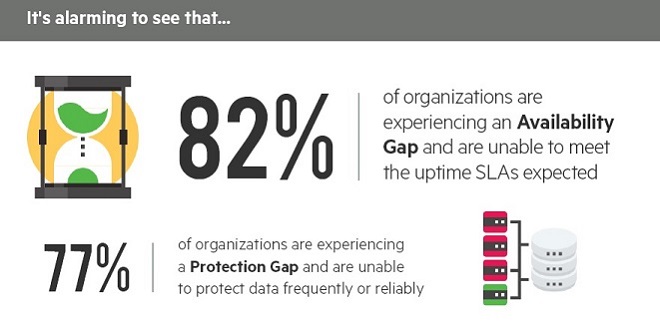

However, there is a major disconnect between user expectations and what IT can deliver, and it is hindering innovation, according to a new survey by Veeam. In fact, 82 percent of enterprises admit to suffering an "Availability Gap" (the gap between users' demand for uninterrupted access to services and what businesses and IT can deliver), which is impacting the bottom line to the tune of $21.8 million per year, and almost two-thirds of respondents admit that this is holding back innovation.

The 2017 Veeam Availability Report shows that 69 percent of global enterprises feel that Availability (continuous access to services) is a requirement for Digital Transformation. However, the majority of senior IT leaders (66 percent) feel these initiatives are being held back by unplanned downtime of services caused by cyber-attacks, infrastructure failures, network outages, and natural disasters, with server outages lasting an average of 85 minutes per incident. While many organizations are still “planning” or “just beginning” their transformational journeys, more than two-thirds agree that these initiatives are critical or very important to their C-suite and lines of business.

Peter McKay, President and COO of Veeam Software, said: “Today, immediacy is King and consumers have zero tolerance for downtime, be it of a business application or in their personal lives. Companies are laser-focused on delivering the best user experience and whether they realize it or not, at the heart of this is Availability. Anything less than 24.7.365 access to data and applications is unacceptable. However, our report states such ubiquitous access is merely a pipedream for many organizations, suggesting new questions need to be asked of transformation plans and a different conversation started about existing infrastructure. Enterprises are facing a major crisis from competitors that are able to offer this uptime and combine that with user experience.”

The Cost of an Outage: More Than Lost Revenue

The report also reveals the true impact of downtime on businesses. While downtime costs vary, the data shows that the average annual cost of downtime for each organization in this study amounts to $21.8 million, up from $16 million in last year’s report.

Downtime and data loss are also exposing enterprises to public scrutiny in ways that cannot be measured on a balance sheet. This year’s study shows that almost half of enterprises see a loss of customer confidence, and 40 percent have experienced damage to brand integrity, both of which affect brand reputation and customer retention. Looking at internal implications, a third of respondents see diminished employee confidence and 28 percent have experienced a diversion of project resources to "clean up" the mess.

The Multi-Cloud Future

Unsurprisingly, cloud and its various consumption models are changing the way businesses approach data protection. The report shows that more and more companies are considering cloud as a viable springboard to their digital agenda, with Software-as-a-Service (SaaS) investment expected to increase by over 50 percent in the next 12 months. Indeed, almost half of business leaders (43 percent) believe cloud providers can deliver better service levels for mission-critical data than their internal IT process. Investments in Backup-as-a-Service (BaaS) and Disaster-Recovery-as-a-Service (DRaaS) are expected to rise similarly as organizations combine them with cloud.

Protection Gap Creates Challenges

In addition, 77 percent of enterprises are seeing what Veeam has identified as a "Protection Gap" — an organization's tolerance for lost data being exceeded by IT’s inability to protect that data frequently enough — with their expectations for uptime consistently being unmet due to insufficient protection mechanisms and policies. Although companies state that they can only tolerate 72 minutes per year of data loss within "high priority" applications, Veeam’s findings show that respondents actually experience 127 minutes of data loss, a discrepancy of nearly one hour. This poses a major risk for all companies and impacts business success in many ways.

Jason Buffington, Principal Analyst for data protection at the Enterprise Strategy Group, said: “The results of this survey show that most companies, even large, international enterprises, continue to struggle with fundamental backup/recovery capabilities, which along with affecting productivity and profitability are also hindering strategic initiatives like Digital Transformation. In considering the startling Availability and Protection gaps that are prevalent today, IT is failing to meet the needs of their business units, which should gravely concern IT leaders and those who answer to the Board.”

Methodology: Veeam commissioned Enterprise Strategy Group (ESG), a leading IT analyst, research, and strategy company, to develop and execute the survey for this report. ESG conducted a comprehensive online survey of 1,060 IT decision makers (ITDMs) from private and public sector organizations with a minimum of 1,000 employees, in 24 different countries, in late 2016. The countries surveyed include Australia, Belgium, Brazil, Canada, China, Denmark, Finland, France, Germany, Hong Kong, India, Israel, Italy, Japan, Mexico, Netherlands, Russia, Saudi Arabia, Singapore, Sweden, Thailand, the UAE, the UK and the US.