Articles proclaiming the death of CMDB started appearing with regularity as early as 2010. Cloud was named as the likely killer. The problem with this bit of folk wisdom is that it isn't true.

Experience and field research at Enterprise Management Associates (EMA) consistently find that CMDB use not only continues but is on the rise. In a 2022 EMA initiative on the rise of ServiceOps, 400 global IT leaders stated that CMDB use was central to major functions. Many of those respondents viewed CMDB as increasing in importance for the automation of complex processes.

Where is the disconnect?

A search for in-depth research on the topic came up pretty much empty. Apparently, shinier topics and trends easily overshadow CMDB. As a result, CMDB's reputation and perceived value are largely a matter of myth and anecdotal guidance. EMA set out to right that wrong with independent research focused squarely on CMDB as it is used today and planned for the near future.

The Bottom Line from a Global Panel of IT Professionals

The CMDB remains fundamental to IT service quality — in many cases, critical — and its use is on the rise.

What best characterizes your view of CMDB in cloud times?

■ 50% CMDB is increasing: it is critical in multi-cloud and hybrid environments

■ 48% CMDB remains a fundamental contributor to IT service quality

■ 2% CMDB is declining in importance

If CMDB has the reputation of being the bad boy of IT, disappointing true believers with anemic returns on expectations, how can its use be on the rise globally?

The key is in the word "expectations."

1. Today, CMDB delivers value that directly maps to top IT initiatives, such as improved service quality and IT personnel productivity, as well as the ongoing drive to decrease unplanned work, outages, and costs.

2. It delivers that value using capabilities that weren't generally available when ITIL v2 birthed the notion of CMDB back in 2001. AI/ML, advanced automation, discovery and dependency mapping (DDM), the ability to handle diverse data sources on a massive scale, and mainstream AIOps all make CMDB objectives workable today in a way that just wasn't realistic 20 years ago.

In those early years, expectations ran high. Results ran low. The mismatch was bad news for the reputation of CMDB.

CMDB 2023 is not the same as CMDB 2001. The high-velocity world it lives in is vastly different, marked by changing combinations of multi-clouds alongside enduring on-premises applications and infrastructure.

The complexity, criticality, and dynamic nature of cloud actually increase the need for CMDB-like functionality. When microservices, container architectures, or applications are deployed across multiple clouds in volatile combinations, capturing the configuration items (CIs) and their relationships becomes immensely more difficult and arguably more important than ever. IT needs a centralized way to track the sprawl of components in order to address security, threat assessment, compliance, cost management/cloud billing, and performance management complete with troubleshooting.
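To make "CIs and their relationships" concrete, here is a minimal Python sketch of the idea, not a description of any CMDB product. The class names, fields, and sample CIs (`ConfigurationItem`, `CIGraph`, `orders-db`, and so on) are all hypothetical, chosen only for illustration. It records which CIs depend on which, then walks those relationships to answer a typical troubleshooting question: what is affected if this component fails?

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfigurationItem:
    """A single CI. These fields are illustrative, not a standard schema."""
    ci_id: str
    ci_type: str   # e.g. "microservice", "database", "application"
    location: str  # e.g. "cloud-a", "cloud-b", "on-prem"


class CIGraph:
    """Toy in-memory store of CIs and their 'depends on' relationships."""

    def __init__(self):
        self.items = {}
        # Maps a CI id to the ids of the CIs that depend on it.
        self._dependents = defaultdict(set)

    def add_item(self, item):
        self.items[item.ci_id] = item

    def add_dependency(self, dependent_id, dependency_id):
        """Record that dependent_id depends on dependency_id."""
        for ci_id in (dependent_id, dependency_id):
            if ci_id not in self.items:
                raise KeyError(f"unknown CI: {ci_id}")
        self._dependents[dependency_id].add(dependent_id)

    def impacted_by(self, ci_id):
        """Return ids of all CIs that depend on ci_id, directly or transitively."""
        seen = set()
        queue = deque([ci_id])
        while queue:
            current = queue.popleft()
            for dependent in self._dependents.get(current, ()):
                if dependent not in seen:
                    seen.add(dependent)
                    queue.append(dependent)
        seen.discard(ci_id)  # a dependency cycle should not list the CI itself
        return seen


# Hypothetical environment spanning on-premises and two clouds.
graph = CIGraph()
graph.add_item(ConfigurationItem("orders-db", "database", "on-prem"))
graph.add_item(ConfigurationItem("orders-svc", "microservice", "cloud-a"))
graph.add_item(ConfigurationItem("checkout-web", "application", "cloud-b"))
graph.add_dependency("orders-svc", "orders-db")
graph.add_dependency("checkout-web", "orders-svc")

print(sorted(graph.impacted_by("orders-db")))  # ['checkout-web', 'orders-svc']
```

Even in this toy form, the relationships carry as much value as the items themselves. In a real multi-cloud environment both change constantly, which is why keeping them current is the hard part.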

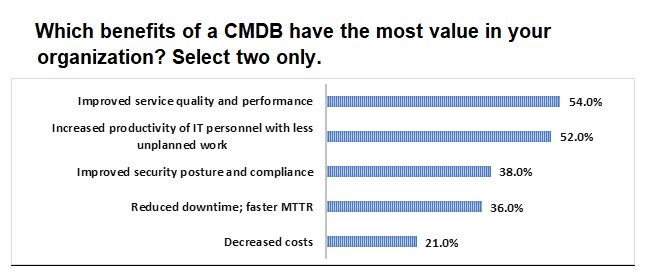

In fact, when asked to name the CMDB use that delivers the highest impact, "performance management" was the clear leader, followed by "security" and "compliance and risk." Asked to name the two most valuable of CMDB's many organizational benefits, the global panel reported a virtual tie between "improved service quality and performance" and "increased productivity of IT personnel with less unplanned work."

CMDB use is on the rise because it serves critical functions in a world where IT service crosses clouds, containers, mainframes, and microservices in a complex brew of technologies and change. Getting it right is not a simple or easy proposition, but neither are the challenges it addresses.

EMA discusses highlights from the research in a free, on-demand webinar: CMDB today - myths, mistakes, and mastery.