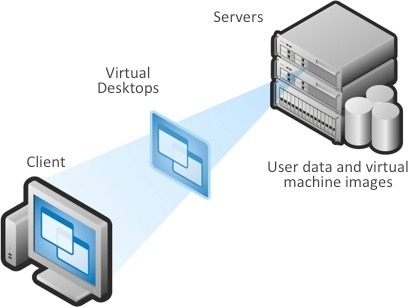

This blog post looks at end-user expectations regarding the perceived performance of applications or desktops delivered by a technology variously called VDI, Desktop Virtualization, Remote Desktop, App Virtualization … you name it.

There are a lot of marketing names out there for a technology that basically separates the presentation layer (GUI) of an application from its processing logic. There is also a large number of protocols and products on the market that achieve this split and help build manageable, user-friendly environments.

At the end of the day, the end-user receives either the GUI of an application or a complete desktop including the application GUI, delivered via a remoting protocol. From an end-user performance point of view, this application or desktop should perform as well as or better than a locally installed application or desktop would.

One of the main reasons VDI implementations don't make it off the ground is that users don't like how virtual machines perform.

Performance Stumbling Blocks

Where are the stumbling blocks to delivering good perceived performance?

Login: When starting a remote desktop or a remote application, a complete login to the environment must be performed. For a desktop, a login time of 15 to 30 seconds is generally accepted. Since login is performed only once a day in most environments, login to desktops (if configured and optimized correctly) is generally not an issue.

The same login process (up to 30 seconds) becomes a problem when it is needed to start a single remote application, because the user expects the application to start instantly. Advanced VDI products support a technique called prelaunch, which performs a hidden login ahead of time and thereby prepares a session context in which the remote application can then start as quickly as a local application.
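
To make the effect of prelaunch concrete, here is a toy Python model, assuming the 30-second login quoted above and a hypothetical 2-second application start. The `SessionBroker` class and both timings are illustrative assumptions, not any product's API: a cold launch pays for the full login, while a prelaunched session only pays for the application start.

```python
# A toy model of prelaunch. SessionBroker and the timings are hypothetical;
# real VDI brokers implement prelaunch in product-specific ways.
LOGIN_SECONDS = 30.0      # full login, upper end of the range quoted in the text
APP_START_SECONDS = 2.0   # assumed time to start the app inside a running session


class SessionBroker:
    def __init__(self) -> None:
        # Users that already have a running (possibly hidden) session.
        self.sessions: set[str] = set()

    def prelaunch(self, user: str) -> None:
        """Perform a hidden login in the background, before the user asks for an app."""
        self.sessions.add(user)

    def launch_app(self, user: str) -> float:
        """Return how long the user waits until the remote application is usable."""
        if user in self.sessions:
            # Session context already exists: only the application itself starts.
            return APP_START_SECONDS
        # Cold start: the user sits through the full login first.
        self.sessions.add(user)
        return LOGIN_SECONDS + APP_START_SECONDS


broker = SessionBroker()
broker.prelaunch("alice")            # e.g. triggered when alice signs in to her client
print(broker.launch_app("alice"))    # 2.0
print(broker.launch_app("bob"))      # 32.0
```

The login cost does not disappear with prelaunch; it is simply paid before the user clicks, where nobody is watching the clock.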

Logoff: With sleep and hibernate functions in modern operating systems, users are no longer used to logging off frequently. VDI, however, relies on freeing resources because they are shared, so users must log off. The time it takes to log off can be hidden from the end-user by disconnecting the display first and then performing the logoff actions in the background. Most of the logoff duration is spent copying roaming profiles.
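
The disconnect-first approach can be sketched with a background thread. In this minimal Python sketch, `copy_profile_out` and `release_resources` are hypothetical placeholders (the profile copy is simulated with a sleep); the point is that `logoff` returns as soon as the display is disconnected, while the slow profile sync finishes afterwards.

```python
import threading
import time


# Hypothetical stand-ins for the real logoff steps; the sleep simulates the
# time needed to sync the roaming profile back to its file share.
def copy_profile_out(user: str, log: list[str]) -> None:
    time.sleep(0.5)
    log.append(f"{user}: profile saved")


def release_resources(user: str, log: list[str]) -> None:
    log.append(f"{user}: session closed, resources freed")


def logoff(user: str, log: list[str]) -> threading.Thread:
    """Disconnect the display immediately, then finish the logoff in the background."""
    log.append(f"{user}: display disconnected")  # the user's wait ends here

    def finish() -> None:
        copy_profile_out(user, log)
        release_resources(user, log)

    worker = threading.Thread(target=finish)
    worker.start()
    return worker


events: list[str] = []
start = time.perf_counter()
worker = logoff("alice", events)
print(f"user waited {time.perf_counter() - start:.3f} s")  # well under the 0.5 s copy
worker.join()                                               # background logoff completes later
print(events)
```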

Roaming Profiles: A VDI environment is most likely built on some kind of roaming profile. Since the user's session context is created on whichever resources happen to be least used at login time, roaming profiles are key to a consistent user experience. Unfortunately, even modern software does not fully support roaming (the Microsoft OneDrive cache, for example, is stored locally in the non-roaming %LocalAppData% folder, i.e. AppData\Local). Since profiles must be copied in during login and copied (synced) out during logoff, keeping profiles small and using folder redirection is recommended.
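
Because the profile is copied at every login and logoff, its size translates directly into waiting time. A rough Python sketch, assuming a profile laid out like a Windows user folder and a hypothetical list of non-roaming or redirected folders (`NON_ROAMING`), counts only the files that would actually roam and divides their size by an assumed copy throughput:

```python
import tempfile
from pathlib import Path

# Assumed non-roaming subfolders: AppData\Local never roams, and Documents is
# assumed to be redirected to a file share. Adjust the list per environment.
NON_ROAMING = [("AppData", "Local"), ("Documents",)]


def roaming_files(profile: Path) -> list[Path]:
    """Files that would be copied in at login and synced out at logoff."""
    files = []
    for path in sorted(profile.rglob("*")):
        if not path.is_file():
            continue
        parts = path.relative_to(profile).parts
        if any(parts[: len(prefix)] == prefix for prefix in NON_ROAMING):
            continue  # excluded: non-roaming or redirected
        files.append(path)
    return files


def estimated_copy_seconds(profile: Path, megabytes_per_second: float) -> float:
    """Naive estimate: roaming bytes divided by an assumed sustained throughput."""
    total_bytes = sum(p.stat().st_size for p in roaming_files(profile))
    return total_bytes / (megabytes_per_second * 1_000_000)


# Demo profile: 2 MB roams; 3 MB local cache and 1 MB redirected documents do not.
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp)
    for rel, size in [("AppData/Roaming/settings.dat", 2_000_000),
                      ("AppData/Local/cache.dat", 3_000_000),
                      ("Documents/report.docx", 1_000_000)]:
        target = demo / rel
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_bytes(b"\0" * size)
    names = [p.name for p in roaming_files(demo)]
    seconds = estimated_copy_seconds(demo, megabytes_per_second=1.0)

print(names, seconds)   # ['settings.dat'] 2.0
```

The estimate is a lower bound: it ignores per-file overhead, which can dominate for profiles made up of many small files.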

Device Remoting: The pervasive use of USB devices, resource-heavy webcams and softphones makes supporting peripherals for virtual desktops a moving target.

Supporting peripherals is key to the virtual desktop user experience. Without access to their familiar printers, cameras, USB ports and other peripherals, users will be far less willing to accept desktop virtualization. As an administrator, you need to know which peripherals are in use and how to support them in a virtual desktop environment. Above all, you need to know how these devices perform for the end-user once the VDI solution is in place.

Remote Access: If the VDI environment is accessed over a WAN connection, there are several parameters to consider. Everybody thinks about bandwidth first. Limited bandwidth used to be the predominant source of network issues affecting user experience, but latency and connection quality (e.g. packet loss) are usually more crucial today.
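
One way to see why latency and loss can matter more than raw bandwidth is the well-known Mathis et al. approximation for the steady-state throughput of a single TCP flow. The article does not cite it; it is used here only as an illustration, and it applies to loss-based TCP congestion control, not to every transport a remoting protocol may use. Note that link bandwidth does not appear in the formula at all:

```python
from math import sqrt


def mathis_limit_mbps(mss_bytes: int, rtt_ms: float, loss_rate: float) -> float:
    """Approximate ceiling for one loss-based TCP flow (Mathis et al.):
    throughput <= (MSS / RTT) * C / sqrt(p), with C = sqrt(3/2), about 1.22."""
    c = sqrt(3 / 2)
    bits_per_second = (mss_bytes * 8) / (rtt_ms / 1000) * c / sqrt(loss_rate)
    return bits_per_second / 1_000_000


# Same 1460-byte segments, two very different links:
print(f"{mathis_limit_mbps(1460, rtt_ms=20, loss_rate=0.0001):.1f} Mbit/s")   # 71.5
print(f"{mathis_limit_mbps(1460, rtt_ms=150, loss_rate=0.01):.2f} Mbit/s")    # 0.95
```

With 1% loss and a 150 ms round trip, a single flow is capped below 1 Mbit/s, however fast the access link is.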

For today's mobile worker, the biggest issue is spectral interference. In a downtown office building, there are Wi-Fi networks on the floors above and below you and across the street. They all create constructive and destructive interference patterns that result in dead zones, high packet loss and degraded interactivity. End-user performance is heavily affected by this packet loss, even when the end device shows a strong signal and sufficient bandwidth is available.
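
A quick probability estimate shows why even modest Wi-Fi loss hurts interactivity: if a screen update spans several packets, the chance that at least one of them is lost grows with the update size. This Python sketch assumes independent losses (real Wi-Fi loss is often bursty), a hypothetical 10-packet screen update and an assumed 200 ms retransmission delay:

```python
def share_of_updates_hit(loss_rate: float, packets_per_update: int) -> float:
    """Probability that at least one packet of a screen update is lost.
    Assumes independent losses, which is a simplification for Wi-Fi."""
    return 1 - (1 - loss_rate) ** packets_per_update


def expected_extra_delay_ms(loss_rate: float, packets_per_update: int,
                            retransmit_delay_ms: float) -> float:
    """Average added delay per update if each hit update waits for one retransmission."""
    return share_of_updates_hit(loss_rate, packets_per_update) * retransmit_delay_ms


# Hypothetical figures: 10-packet screen updates over a link with 2% loss.
print(f"{share_of_updates_hit(0.02, 10):.1%} of updates stall")            # 18.3%
print(f"{expected_extra_delay_ms(0.02, 10, 200):.0f} ms added on average")  # 37
```

The average understates what the user feels: the affected updates do not arrive slightly late, they stall for the full retransmission delay.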

Latency on long-distance connections (e.g. datacenter in the US, user in China) adds another problem to the VDI user experience. By definition, a protocol separates the presentation layer (GUI) from the processing layer, so every keystroke must travel to the server and the resulting screen update must travel back. On a WAN connection with high latency (> 150 ms), the user will therefore notice delays while typing, even in simple programs such as editors or the data entry forms of CRM tools. Latency caused by distance cannot be reduced, but some of the symptoms inherited from the TCP/IP protocol can be mitigated; this is where WAN optimization products help.
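
The distance-induced part of the latency can be estimated from the speed of light in optical fiber, roughly 200 km per millisecond. The sketch below assumes a hypothetical 12,000 km fiber route and made-up processing times; it only illustrates that, in a pure remoting setup, every keystroke pays at least one full round trip before the character appears:

```python
# Light in optical fiber travels at roughly two thirds of c: about 200 km per ms.
FIBER_KM_PER_MS = 200.0


def min_rtt_ms(fiber_path_km: float) -> float:
    """Lower bound on the round-trip time from propagation delay alone."""
    return 2 * fiber_path_km / FIBER_KM_PER_MS


def keystroke_echo_ms(rtt_ms: float, server_ms: float, codec_ms: float) -> float:
    """Key press -> event to the server -> app redraws -> screen update back."""
    return rtt_ms + server_ms + codec_ms


# Hypothetical 12,000 km route (real cable routes exceed the great-circle distance),
# assumed 10 ms application time and 20 ms to encode and decode the screen update.
rtt = min_rtt_ms(12_000)
print(rtt, keystroke_echo_ms(rtt, server_ms=10, codec_ms=20))   # 120.0 150.0
```

No protocol tuning can push the round trip below this propagation floor, which is why WAN optimization can only address the TCP/IP side effects.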

Why Does VDI Require Its Own Monitoring Tools?

You can't use your server monitoring tools for VDI performance monitoring. The goal of monitoring virtual desktops must be to assess the user experience, whereas most monitoring tools on the market mainly document resource usage. Plus, virtual desktop workloads change far more often than those of traditional PCs or servers, so you have to monitor them more closely and more frequently. Look for a tool that monitors end-to-end connectivity (from the frontend to the backend servers) and offers metrics about the network, the physical machines and the virtual machines.
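
A minimal sketch of what an end-to-end measurement record might look like: one user interaction broken down into network, physical host and virtual machine segments, so that the user-visible total and the likely bottleneck can be read from the same data. The class and field names are hypothetical, not taken from any particular monitoring product:

```python
from dataclasses import dataclass


@dataclass
class InteractionSample:
    """One measured user interaction, broken down along the delivery path.
    Field names and segment boundaries are hypothetical; real tools differ."""
    user: str
    network_ms: float   # endpoint <-> datacenter round trips
    host_ms: float      # physical machine: hypervisor scheduling, storage waits
    vm_ms: float        # inside the virtual desktop: application and rendering

    @property
    def total_ms(self) -> float:
        # What the user actually experiences.
        return self.network_ms + self.host_ms + self.vm_ms

    def dominant_segment(self) -> str:
        # The segment contributing most to the delay: a first hint at the root cause.
        segments = {"network": self.network_ms, "host": self.host_ms, "vm": self.vm_ms}
        return max(segments, key=segments.__getitem__)


sample = InteractionSample("alice", network_ms=180, host_ms=20, vm_ms=40)
print(sample.total_ms, sample.dominant_segment())   # 240 network
```

A server-centric tool would see only `host_ms` and `vm_ms` here and report a healthy system, while the user waits mostly on the network.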

A monitoring solution designed with end-user transaction performance in mind gives IT Ops application and virtual desktop performance monitoring that is correlated with user productivity. Armed with this data, IT Ops can rapidly investigate users' complaints about poor app performance and identify other affected users and the likely root causes. They can then resolve the issue before workforce productivity suffers. For that reason, IT organizations will increasingly rely on performance metrics such as application and transaction response times. End-user experience indexes built on these metrics allow IT to monitor the speed of applications and evaluate the quality of the end-user experience.
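
The article does not name a specific end-user experience index. Apdex is one widely used open standard for turning response times into a score between 0 and 1, so it serves as a stand-in here: given a target time T, responses up to T count as satisfied, those up to 4T as tolerating, and slower ones as frustrated. The `impacted_users` helper and its 0.85 threshold are hypothetical additions that mimic the step of identifying other affected users:

```python
from collections import defaultdict


def apdex(response_times_s: list[float], t_s: float) -> float:
    """Apdex score: (satisfied + tolerating / 2) / total, where satisfied means
    r <= T and tolerating means T < r <= 4T; anything slower is frustrated."""
    if not response_times_s:
        raise ValueError("no samples")
    satisfied = sum(1 for r in response_times_s if r <= t_s)
    tolerating = sum(1 for r in response_times_s if t_s < r <= 4 * t_s)
    return (satisfied + tolerating / 2) / len(response_times_s)


def impacted_users(samples: list[tuple[str, float]], t_s: float,
                   min_score: float = 0.85) -> list[str]:
    """Users whose own Apdex falls below a (hypothetical) alert threshold."""
    by_user: dict[str, list[float]] = defaultdict(list)
    for user, response_s in samples:
        by_user[user].append(response_s)
    return sorted(u for u, times in by_user.items() if apdex(times, t_s) < min_score)


samples = [("alice", 0.3), ("alice", 0.4), ("bob", 0.3), ("bob", 2.5), ("carol", 1.2)]
print(impacted_users(samples, t_s=0.5))   # ['bob', 'carol']
```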

Passive end-user application performance measurement systems, supported by plenty of CPU resources, spare network bandwidth, affordable storage space and Big Data analysis, can help deliver a seamless desktop virtualization experience for end-users.

Reinhard Travnicek is Managing Director Technology at X-Tech.