Despite the advancement and growing maturity of observability systems, production issues are taking longer to resolve, according to the DevOps Pulse 2022 Report from Logz.io.

DevOps Pulse 2022 respondents consistently reported increasing complexity across their cloud environments, driven by everything from expanding microservices architectures to the proliferation and complexity of observability tools themselves. As a result, they are struggling to maintain clear visibility, quickly resolve production issues, and manage related monitoring costs.

64% of respondents reported an average MTTR of over an hour, compared with 47% in the DevOps Pulse 2021 survey.

Put another way, 53.4% of respondents surveyed last year said they resolved production issues within an hour on average; this year, that number dropped to 35.94%. This trend is being driven by factors ranging from growing cloud data volumes and systems complexity to observability tool sprawl and the need for greater expertise among DevOps teams.

Additional key findings identified in the report include:

Observability costs and data volumes are growing concerns

27% of respondents ranked total cost of ownership and the large volumes of data being ingested into the tools among their main challenges in maintaining effective observability.

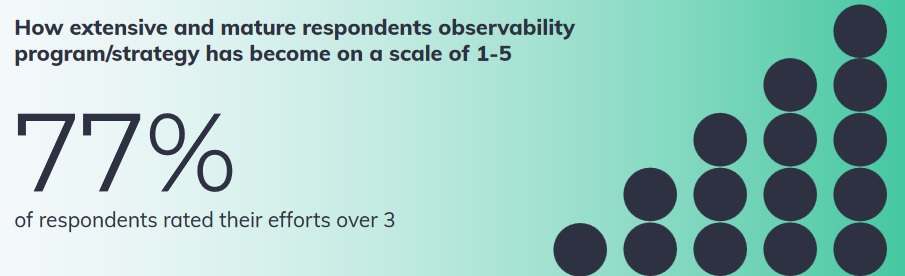

Observability is maturing

Some 77% of respondents rated their efforts over 3 on a scale of 1 to 5 when asked how extensive and mature their observability strategy has become. Last year, by comparison, more than 30% of respondents gave a low score of 2 or less.

This maturity comes with a marked increase in observability tool sprawl: 22% of those surveyed indicated their organization uses 5 or more observability tools, compared to 11% last year.

Open source capabilities are widely used

Some 90% of respondents use open source capabilities, and open source is the most common way to deploy observability.

A shared services model is growing in popularity

Over 85% of respondents indicated that their organizations operate using a shared services observability model in which a central team is responsible for implementing and maintaining tooling for other stakeholders such as app developers, SREs and DevOps teams.

Tools are further diversifying

This year's survey responses highlighted the full range of observability tools in use by practitioners, led by log management and analysis (67%) and infrastructure monitoring (59%), with distributed tracing (27%) and APM (22%) some way behind. Meanwhile, some 21% said that they have deployed all of these capabilities.

"Essentially, the 2022 DevOps Pulse Report reveals that there is too much data and the current model for observability is broken," says Tomer Levy, CEO of Logz.io. "As practices and implementations expand, organizations are becoming more concerned about the impact of data volumes on production quality and cost. In addition to offering an analysis of the evolving landscape, DevOps Pulse 2022 calls on organizations to think carefully about the impact of Kubernetes and Microservices and constantly evaluate telemetry data value and hygiene."

By closely tracking and analyzing data that is central to core observability requirements and finding ways to reduce MTTR despite identified challenges, organizations can better calculate associated spending and ROI. Emphasizing these factors, combined with an increased focus on application and data security, can help solve the challenges identified by DevOps teams and observability practitioners.