We live in a world where we expect instant gratification, especially when it comes to the quality of our internet experience. We want 24/7 access to our financial accounts, “one-click” shopping on eCommerce sites and, of course, answers to the boundless array of questions we have on a daily basis.

However, as quickly as that information arrives at our fingertips, there is a speed bump along the way. Believe it or not, you aren’t being impatient. The Internet is getting slower, and just about everyone is noticing.

So what’s part of the cause? Page bloat and unoptimized images.

Unoptimized Images Are Bogging Down the User Experience

People browsing the web expect a similar experience to a fast-paced HD TV channel, with intensive graphics, animations and other visual assets, and site designers have largely obliged.

However, there’s been a push-pull dynamic: people also expect websites to load as quickly as the changing of a channel, serving bright, high-resolution images in real-time.

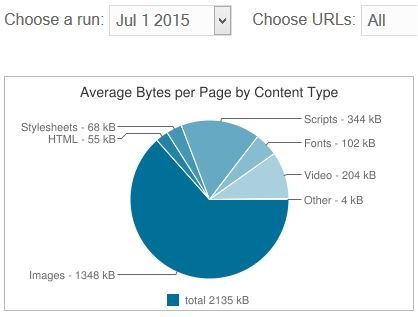

According to data from HTTP Archive, the average website is now 2.1 MB, twice as large as the average website in 2012. Images, scripts and video make up most of that weight.

Source: HTTP Archive

The proliferation of smartphones, tablets and even watches that can access the Internet isn’t helping, because it leads to incredible fragmentation.

All of these devices come with marketing campaigns promising portable powerhouses and breakneck speed, but those campaigns don’t speak to the real-world bottlenecks in play, from browsers to bandwidth.

And the Solution Is…?

While coverage of the issue presents the challenges adequately, it doesn’t cover the steps that can be taken to solve the problem.

If it did, you’d be reading about automation solutions. The right web performance optimization (WPO) solution enables faster websites and web-based applications, optimizes images on the fly, and selects the most efficient image compression format that the browser supports.
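To make the format selection concrete, here is a minimal sketch of how a server might choose an image format from the browser's HTTP `Accept` request header. It is an illustration under stated assumptions, not a description of any particular WPO product: the function name `pick_image_format`, the preference order (AVIF, then WebP) and the JPEG fallback are all hypothetical choices.

```python
# Assumed preference order for illustration: try the formats that
# usually compress better first, then fall back to plain JPEG.
PREFERRED_FORMATS = ["image/avif", "image/webp"]
FALLBACK_FORMAT = "image/jpeg"


def pick_image_format(accept_header: str) -> str:
    """Return the first preferred format listed in the Accept header."""
    accepted = set()
    for part in accept_header.split(","):
        fields = [f.strip().lower() for f in part.split(";")]
        media_type = fields[0]
        # A quality value of zero means the browser refuses this type.
        refused = any(f.replace(" ", "") in ("q=0", "q=0.0") for f in fields[1:])
        if media_type and not refused:
            accepted.add(media_type)
    for fmt in PREFERRED_FORMATS:
        if fmt in accepted:
            return fmt
    # Wildcards such as */* are deliberately not treated as support
    # for a newer format, so older browsers get the safe fallback.
    return FALLBACK_FORMAT


if __name__ == "__main__":
    print(pick_image_format("image/avif,image/webp,image/apng,*/*;q=0.8"))
    print(pick_image_format("*/*"))
```

A server that varies its response this way would normally also send `Vary: Accept`, so that caches keep the variants apart.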

It’s an elegant solution to a complex challenge, and it can yield serious gains in Time to Interact (TTI), which measures the total time between the first request and the point where the feature image has loaded and/or interactive elements can be engaged with. This is a key metric, and the real gauge of site speed.
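As a rough illustration of that measurement, the sketch below computes TTI from three timestamps. The function and parameter names are hypothetical, and because the definition says "and/or", waiting for the later of the two events is only one possible reading.

```python
def time_to_interact(request_start_ms: float,
                     feature_image_loaded_ms: float,
                     interactive_ready_ms: float) -> float:
    """Milliseconds from the first request until the page is usable.

    Assumption: the page counts as usable once BOTH the feature image
    has loaded and the interactive elements respond.
    """
    return max(feature_image_loaded_ms, interactive_ready_ms) - request_start_ms
```

For example, with the request at 0 ms, the feature image at 1,800 ms and interactivity at 2,400 ms, this reading gives a TTI of 2,400 ms.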

So, when you are feeling a bit impatient that your website isn’t loading quickly enough, remember: it’s not you. Web pages simply aren’t loading as quickly as they used to. Just know that an automation solution can address the spinning wheel of interminable loading.

Kent Alstad is VP of Acceleration at Radware.