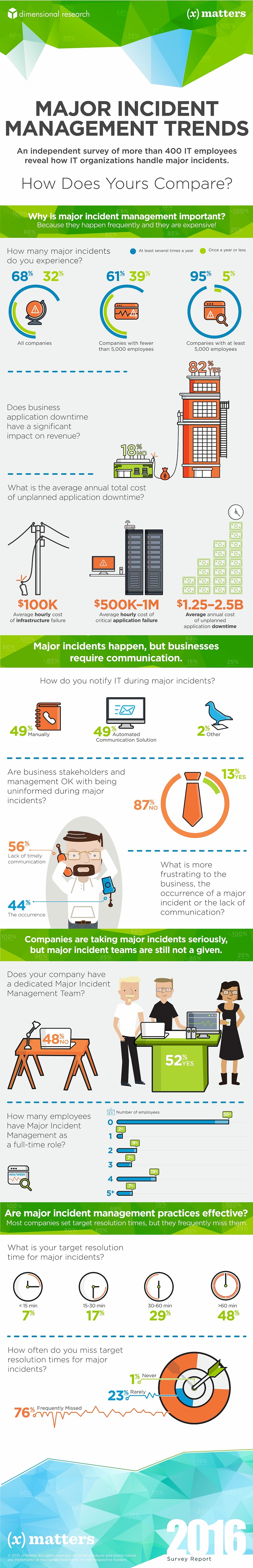

If your critical business applications go down, or even run below peak level, your business pays a tremendous price. When a major IT incident occurs, engaging the right people quickly to restore service and manage communications is crucial. No big news flash there. However, I have to admit I was pretty alarmed when a new survey by Dimensional Research revealed an almost cavalier approach toward the handling of major IT incidents. Security and business incidents occur so regularly that we aren't even surprised anymore when they happen. They come in the form of data breaches, malware attacks, power outages, intermittent service availability and performance degradation, to name a few. In fact, according to the survey, 68 percent of companies surveyed experienced a major incident at least several times a year. For larger organizations with at least 5,000 employees, that figure rises to more than 90 percent.

The Consequences of Slow Response

Rapid, effective response can limit the damage. In a separate survey performed by Dimensional Research in April, 60 percent of respondents said finding and engaging the right person takes more than 15 minutes. But before 15 minutes have elapsed, almost half (45 percent) said the business has already started to suffer. And the suffering is real, according to the most recent survey. A large majority (82 percent) say application downtime affects revenue. According to a 2014 study by industry analyst firm IDC, the average cost of a critical application failure is $500,000 to $1 million per hour. Given how quickly, seriously and frequently a major incident affects businesses, why aren't they making critical investments in major incident management?

Money and Resources

First, a best-in-class intelligent communication platform is not cheap. So organizations that still view major incidents as unlikely events could be put off just by the cost. Another factor is resources. Barely half of companies in the new survey (52 percent) have a major incident team. Only 44 percent of those companies have team members who are dedicated solely to major incident management. Finally, maybe the word hasn't gotten out to all companies just how important rapid and effective major incident management is.

Is the Status Quo Working?

The effectiveness of current practices is not entirely clear because only 68 percent of companies even specify target times for resolving major incidents. But among those that do, the results are not good. More than three-quarters of respondents (76 percent) miss their target times sometimes or often. Most companies in the survey (58 percent) have target times between 30 and 90 minutes. Remember the IDC figure of up to $1 million per hour of application downtime? Do the math.
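For anyone who wants that math spelled out, here is a rough sketch in Python. It assumes downtime cost accrues evenly over time, which is a simplification of my own and not something the IDC study claims; the function name and constants are purely illustrative.

```python
# Back-of-the-envelope downtime cost.
# Assumption: cost accrues linearly with time. The IDC figure is an
# hourly average, so real incidents may cost more or less per minute.

COST_PER_HOUR_LOW = 500_000     # IDC low estimate, USD per hour
COST_PER_HOUR_HIGH = 1_000_000  # IDC high estimate, USD per hour


def downtime_cost(minutes: float, cost_per_hour: float) -> float:
    """Return the estimated cost in USD of an outage lasting `minutes`."""
    return minutes / 60 * cost_per_hour


# Evaluate both ends of the 30-to-90-minute target window.
for minutes in (30, 90):
    low = downtime_cost(minutes, COST_PER_HOUR_LOW)
    high = downtime_cost(minutes, COST_PER_HOUR_HIGH)
    print(f"{minutes} minutes: ${low:,.0f} to ${high:,.0f}")
```

Under that assumption, an incident resolved right at a 30-minute target costs roughly $250,000 to $500,000, and one that takes the full 90 minutes costs roughly $750,000 to $1.5 million. Miss the target and the bill keeps climbing.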

So What Have We Learned?

Regardless of why more companies haven't created processes and implemented solutions for resolving major incidents, the current state of affairs is troubling. And this article has only touched on the financial implications of major incidents. Businesses also suffer from reputational damage, loss of customer loyalty and trust, and sanctions from regulatory bodies. Major incidents happen frequently, and every business should assume that sooner or later it will experience one. The ability to respond quickly, efficiently and effectively could save the business, its shareholders, its customers and its partners. Are you prepared?