The challenging reality for most IT departments is that new software for integrating processes and thus improving productivity can turn out to be the source of additional IT headaches. This is at odds with what should be an organizational priority: making the IT department's life easier. Such an approach is wise not just to keep critical computing systems purring but to avoid disgruntlement and costly turnover within this important corporate group.

But when word comes down to IT from above that new enterprise software will be installed, life usually doesn't get easier. This is particularly true of monitoring tools for an Enterprise Service Bus (ESB) like Microsoft's BizTalk. ESBs are widely used to manage disparate business processes handled by different software systems, so they obviously need monitoring to prevent costly downtime. However, monitoring tools add yet another layer of complexity, which means more work for a typically overburdened IT group.

What's typical with monitoring software is the need to hire external consultants for installation and tuning. There goes some of a department's scarce budget! Then there's the really tricky part: determining, after the consultants leave, what was implemented and which techniques worked best. "Plug and play" is usually a pipe dream for something as complex as ESB monitoring tools. Figuring out the baseline business traffic patterns and thresholds needed to set up monitoring is very tough.

The typical route with monitoring tools is manually configuring, adjusting and re-adjusting thresholds and monitoring parameters, likely with very little input from the business side of an enterprise. It's often a shot in the dark that ends up delivering too many or too few alerts.

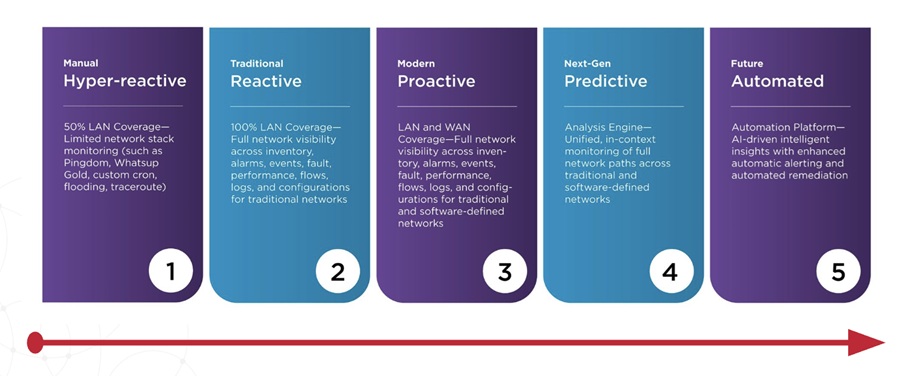

With this blindfolded approach, the daily reality for IT is having to investigate thousands of alerts, most of which they don't believe are real and simply end up deleting. In the process, they may delete a few that are real. In essence, monitoring tools become reactive, sending warnings only after the system has already broken down.

Unfortunately, there are other pesky issues to consider. Many ESB monitoring platforms are extremely rigid and time-consuming to maintain. They often need someone on site to install them. In addition, these platforms are frequently bundled with other products and thus do more than just monitoring, forcing IT personnel to obtain extra certification and training.

The biggest problem, however, is the specter of potential server downtime — the scariest issue of all. One recent study of U.S. data centers determined that the average cost of downtime was $5,600 per minute, with the average reported duration being 90 minutes. Even if an enterprise system is up 99.5% of the time, this still translates to almost 44 hours a year of downtime. This is the ultimate nightmare for IT.
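To make the arithmetic behind these figures concrete, here is a minimal sketch in Python. It uses only the numbers quoted above (99.5% availability, $5,600 per minute, 90 minutes); the function names are illustrative, and a non-leap year of 8,760 hours is assumed.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, assuming a non-leap year


def annual_downtime_hours(availability_pct: float) -> float:
    """Hours per year a system is unavailable at the given availability."""
    return (1 - availability_pct / 100) * HOURS_PER_YEAR


def outage_cost(cost_per_minute: float, duration_minutes: float) -> float:
    """Cost of a single outage at a flat per-minute rate."""
    return cost_per_minute * duration_minutes


# Figures quoted in the article
hours_down = annual_downtime_hours(99.5)
average_outage = outage_cost(5600, 90)

print(f"{hours_down:.1f} hours of downtime per year")  # 43.8
print(f"${average_outage:,.0f} per average outage")    # $504,000
```

In other words, 0.5% of 8,760 hours is 43.8 hours, and a single outage of average length at the average rate costs roughly half a million dollars.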

With all these challenges in mind, it's welcome news that some IT teams have been able to wade through all the options and zero in on tools that avoid some of the common pitfalls. When the IT department of Aon Norway started reviewing monitoring tools, its goal was software as intuitive as the iPhone. Aon Norway is part of Aon plc, the world's largest insurance brokerage, and its IT team faced the same challenges as most IT groups but achieved a positive outcome.

Ivar Sagemo is CEO of AIMS Innovation.
