IT teams need modern technologies to extract valuable insights from the oceans of data businesses collect today, according to a new report. The report found that 71% of the 1,303 chief information officers (CIOs) and other IT decision makers surveyed say the colossal amount of data generated by cloud-native technology stacks is now beyond human ability to manage.

To keep pace with all the data from complex cloud-native architectures, organizations need more sophisticated solutions to power operations and security, said those surveyed.

Core to these new technologies is automation supported by AISecOps, a methodology that brings artificial intelligence to operations and security tasks and teams. The findings of the survey, conducted by Coleman Parkes and commissioned by Dynatrace, were published in the 2022 Global CIO Report.

Without a more automated approach to IT operations, 59% of CIOs surveyed say their teams could soon become overloaded by the increasing complexity of their technology stack. In addition, 93% of CIOs say AIOps (AI for IT operations) and automation are increasingly vital to easing the shortage of skilled IT, development, and security professionals and to reducing the risk of teams burning out from the complexity of modern cloud and development environments.

Glut of Data and Lack of Effective Tools Create Numerous Problems

The era of big data has created scores of opportunities, but it also has posed nearly as many challenges. The hyperconnected environments created using multicloud strategies, Kubernetes and serverless architectures enable organizations to accelerate the building of customized and innovative new architectures. These new environments, however, have become increasingly distributed and complex.

Meanwhile, IT and application managers must cobble together a host of legacy technologies to monitor and maintain visibility into performance and availability. The survey found that CIOs say their teams use an average of 10 monitoring tools across their technology stacks, though they have observability of just 9% of their environment.

This ad hoc approach makes it more difficult to deliver the best-performing and most secure software applications. The reasons are simple: each separate tool requires a different skill set to interpret the data's meaning and each organizes and visualizes metrics in different ways. Also, each tool provides visibility into just one layer of the stack, which creates data silos.

More Complex Environments Are Costly, Take Toll on Workers

When it comes to cost, 45% of CIOs say it's too expensive to manage the large volume of observability and security data using existing analytics solutions. As a result, respondents say they keep only what is most critical.

The mounting complexity and hassle involved in maintaining operations also take a toll on employees; 64% of CIOs say it has become harder to attract and retain enough skilled IT operations and DevOps professionals.

Another problem is that log analytics, traditionally the primary way to unlock insights from data and optimize software performance and security, too often can't scale to handle the torrent of observability and security data generated by today's technology stacks.

Automation and AISecOps Are the Answer

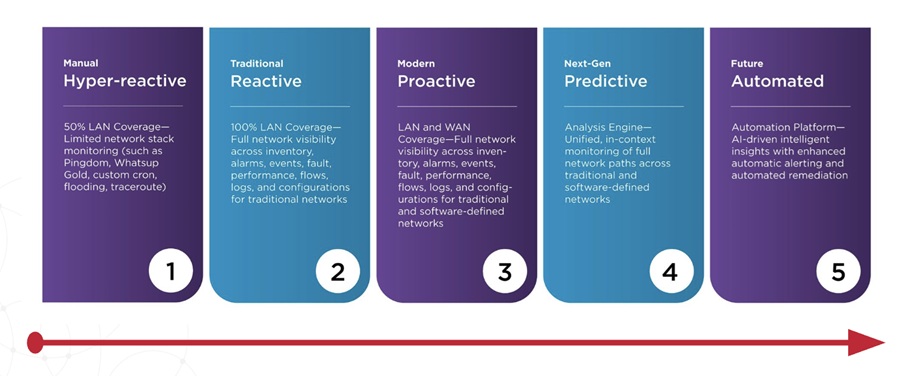

IT teams need a more automated approach to operations and security, combined with AISecOps. Achieving this effectively requires an end-to-end observability and application security platform with the ability to capture data in context and provide AI-powered, advanced analytics with high performance, cost-effectiveness and limitless scale.

Data warehouses have also become outdated. Strategies built around a data lakehouse, one that features powerful processing capabilities, will drive greater innovation and efficiency. This kind of strategy harnesses petabytes of data at the speed needed to turn raw information into precise and actionable answers that drive AISecOps automation.

Another benefit of this model is the freeing of skilled DevOps teams from arduous, routine manual tasks, enabling them to work on more strategic, innovation-driving projects.

According to the survey, CIOs estimate that their teams spend 40% of their time just "keeping the lights on," and that these valuable hours could be saved through automation.

Organizations suffering from these issues should seek out an all-in-one platform that provides observability, application security and AIOps. With this strategy, leaders can provide their teams with an easy-to-use, automated, and unified approach that delivers precise answers and exceptional digital experiences at scale.