As an IT professional, I'm used to words that mean different things to different people. For example, "log monitoring" could mean anything from watching simple text files to running logfile aggregation systems. "Uptime" is also notoriously hard to nail down. Heck, even the word "monitoring" itself can be ambiguous.

To illustrate this phenomenon, I often bring up the (completely unrelated) poem "Lion-Eating Poet in the Stone Den," which is written in Classical Chinese. Spoken out loud, every syllable is a variation of the sound "shi," differing only in tone. But as you can see, despite the near-identical pronunciation, the words have very different meanings.

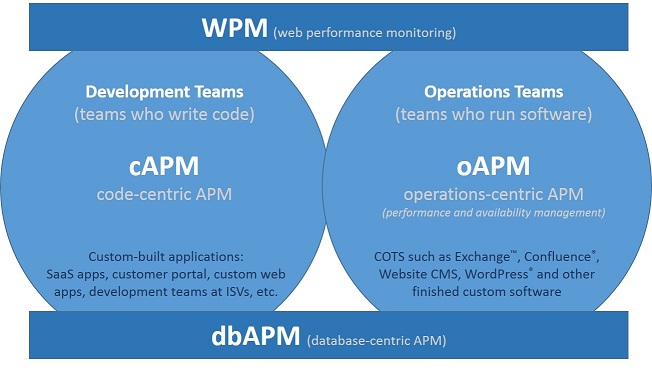

This is why I'm not surprised that application performance monitoring (APM) can mean so many different things depending on the context. What is most confounding is that these usages are not mutually exclusive; they overlap. This graphic demonstrates:

As you can see, there's code-centric APM (cAPM), where the focus is on code execution, transactions moving through the message queue, transforms, etc. This type of APM is often applied to custom-developed code or to applications that are highly transactional in nature.

At the other end of the spectrum, there's operations-centric APM (oAPM). This type of APM is more concerned with what's often called "shrink-wrapped" software, which can be anything from single-purpose business utilities to enterprise-class tools such as Microsoft Exchange, and even foundational things like the operating system itself. The point isn't that these applications are any less sophisticated than the ones monitored with code-centric APM, but that their monitoring needs are different. More on this point in a moment.

There's also web-centric APM, or web performance monitoring (WPM), which, as the name implies, is focused on monitoring web applications. So it's less about the code execution or the stability of the underlying server application, and more about how the user of the web application is experiencing the service.

Finally, there's database-centric APM (dbAPM). In this variant, it's all about the things that make your database go bump in the night: long-running queries, locking, blocking, and wait states.

If you look at it closely, you can see the overlap. cAPM still cares that the application itself is healthy, and it can provide insight into things like services and processes, performance counters, and log messages. But that's not its primary focus. Similarly, oAPM can expose issues with transactions, but not to the level that cAPM does. Where it shines, however, is in operational metrics. The same is true for WPM and dbAPM: each can see into its neighbors' territory, but its real depth lies in its own.

All of this has always been true, but it wasn't as clear until recently. The emergence (and convergence) of cloud, DevOps, hybrid IT, and everything-as-a-service (EaaS) has highlighted both the overlap and the differences.

This is why I recently predicted that, "2017 will be the year of 'not just' in APM. As in 'not just agent-based transaction tracking' or 'not just for DevOps.' But most importantly, 'not just for home-grown code.' In the coming year, APM will fully embrace the words behind the acronym to include tools and techniques that allow management of all application types — from those developed in-house to customized-off-the-shelf ones, to pure shrink-wrap apps that enterprises purchase, install, and run as-is. Yes! Some of those really do still exist."

I'm looking forward to the time (in this coming year, if my prediction holds true) when IT professionals can say "APM" and understand the nuances the same way students of Chinese literature understand that "shí shì shī shì shī shì" means "A poet named Shi lived in a stone room."