OpenTelemetry is quickly becoming a foundational element of observability, according to a new report I wrote in partnership with Dan Twing, President and COO of Enterprise Management Associates (EMA), titled *Taking Observability to the Next Level: OpenTelemetry's Emerging Role in IT Performance and Reliability*. The report was sponsored by Elastic, an APMdigest sponsor, as well as Apica, Beta Systems, Dynatrace, Embrace and SolarWinds.

OpenTelemetry (OTel) is an open source CNCF project offering a framework and suite of tools including APIs and SDKs that facilitate the generation, collection, and exporting of telemetry data for observability platforms and related tools. OTel collects logs, metrics and traces, and is expanding data types to include profiling and many other possibilities.

This report comes at just the right time, with OpenTelemetry emerging as an essential component of modern observability. Our first objective for the research was to assess the awareness and perception of OpenTelemetry in the IT industry. We assumed the research would show that the project has good momentum, but the results were even stronger than expected, with a majority (68.3%) of respondents saying they are moderately or very familiar with OTel.

OpenTelemetry also enjoys a positive perception, with half of respondents considering OpenTelemetry mature enough for implementation today, and another 31% considering it moderately mature and useful. In other words, more than 80% feel that OpenTelemetry can be used now. And almost everyone surveyed (98.7%) expresses support for where OpenTelemetry is heading, a very strong vote of confidence. Notably, those last two groupings include respondents who are only marginally familiar with OpenTelemetry, which suggests that OTel has a rock-solid reputation.

The majority also say OpenTelemetry's role in observability is important: 61% believe OpenTelemetry is a very important or critical enabler of observability, and 57% rate it as similarly important to their own observability strategy.

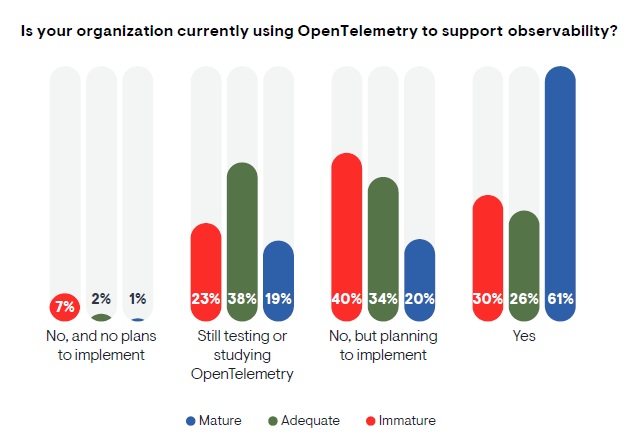

The usage numbers are also encouraging. The report states, "Almost half (48.5%) of respondents currently use OpenTelemetry. Another 25.3% are not using OpenTelemetry yet, but are planning to implement. This means that just under 75% are either using or planning to use OpenTelemetry, a statistic that bodes well for the future of the standard. The remaining 24.8% are still evaluating, while only 1.5% of respondents had no plans to implement."

The survey findings further reflect the momentum of OpenTelemetry by showing how observability maturity correlates directly with the awareness, perception and even adoption of OpenTelemetry. A majority (64%) of survey respondents assess their own observability practices as mature or very mature. Within that group, 45% are very familiar with OpenTelemetry, 67% see OpenTelemetry as very important or critical to their own observability strategy, and 61% already use OpenTelemetry.

The EMA report contains many more interesting statistics about OpenTelemetry that can be valuable to both observability practitioners and IT product vendors, answering questions such as:

- Where are users deploying OpenTelemetry?

- What are the concerns and challenges?

- What are the benefits of OpenTelemetry?

- What level of ROI are users gaining?

- What are the expectations for OpenTelemetry's future?

One of the final points we made in the report is that OpenTelemetry will become a competitive advantage for organizations across most industries: "One of the most consequential points to consider: the survey findings suggest that your competitors have already started using OpenTelemetry to improve digital performance, availability, and the user experience. With this in mind, if you have not already adopted OpenTelemetry, the time to start is now."