Earlier this year, New Relic conducted a study on observability. The company surveyed 1,700 IT practitioners and decision-makers across 15 countries in North America, Europe and the Asia Pacific region to understand the current state of the practice and the external forces influencing spending and adoption. The 2023 Observability Forecast reveals observability's impact on the lives of technical professionals and on businesses' bottom lines.

Here are 10 key takeaways from the forecast:

1. Observability delivers 2x annual ROI

Respondents reported a median annual return of $2 for every $1 invested in observability, and 41% reported receiving more than $1 million in total annual value. Almost all respondents (96%) expected a significant negative business outcome without observability, citing higher operating costs and revenue lost to downtime as the concrete financial impacts of not having it.

2. Outages are expensive, so observability is critical

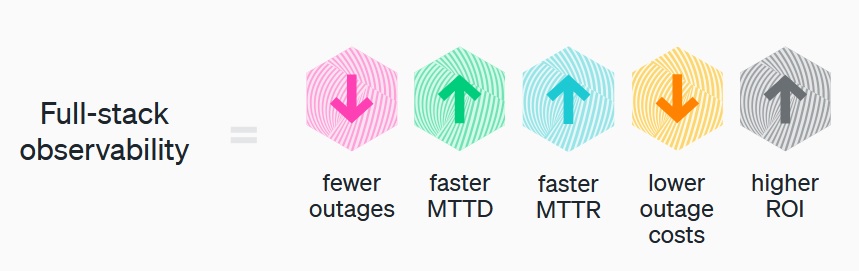

Nearly a third (32%) of respondents said outages of critical business apps cost more than $500K per hour of downtime. Respondents reported a median annual outage cost of $7.75 million, but those with full-stack observability reported a median outage cost 37% lower than those without it.

3. Observability improves service-level metrics

Respondents with full-stack observability were more likely to report the fastest mean time to resolution (MTTR), and they were 19% more likely than those without full-stack observability to resolve high-business-impact outages in 30 minutes or less.

4. Observability adoption is accelerating

While most organizations still don't monitor their full tech stack, this is changing. Adoption of full-stack observability increased 58% year over year (YoY). At least 82% of respondents expected to have deployed each of 17 different observability capabilities by mid-2026.

5. Organizations want tool consolidation

Tool sprawl remains an obstacle for organizations of all sizes, despite a 2-to-1 preference for a single, consolidated platform. However, the proportion of respondents using a single tool more than doubled YoY, and the average number of tools deployed fell by almost one.

6. Organizations still haven't fully unified telemetry data

Siloed and fragmented data make for a painful user experience. Among the 40% of respondents who had more siloed data, 68% said they strongly prefer a single, consolidated platform. Respondents with more unified telemetry data were more likely than those with more siloed telemetry data to have fewer high-business-impact outages, a faster mean time to detection (MTTD), and a faster MTTR.

7. Security is driving observability adoption

Modern applications typically run in the cloud and depend on hundreds of components, each introducing additional monitoring challenges and security risks. Nearly half of respondents (49%) said an increased focus on security, governance, risk, and compliance was driving the need for observability, making it the top driver for the second year in a row. This focus reflects the rise of cybersecurity threats and increasingly complex cloud-native application architectures.

8. AI and business apps are also driving observability adoption

About two in five respondents (38%) said the integration of business apps into workflows and the adoption of artificial intelligence (AI) technologies were driving the need for observability. The focus on AI and on business apps such as enterprise resource planning (ERP) and customer relationship management (CRM) makes sense, because organizations are competing to attract and retain customers by providing the best customer experience.

9. Observability delivers clear business benefits

Almost half of practitioners (46%) said observability increases their productivity, helping them find and resolve issues faster. Among IT decision-makers, 35% said it helps them achieve technical key performance indicators (KPIs), and 31% said it helps them achieve business KPIs. Across all respondents, two in five (40%) named improved system uptime and reliability as a primary benefit (13% more than last year), while 38% cited increased operational efficiency and 34% cited security vulnerability management.

10. The increase in observability deployment is expected to continue

Most respondents (83%) expected to deploy at least one new capability in the next year: more than half (51%) expected to deploy one to five, and nearly a third (32%) expected to deploy six or more. For 2024, at least 90% of organizations expect to deploy capabilities such as network monitoring, database monitoring, security monitoring, and alerts. These findings point to strong growth for observability in the near future.