Tech-Tonics Advisors introduced the PADS (Performance Analytics Decision Support) Framework two years ago as a higher-level strategic approach for organizations to ensure user experience and application performance. Our premise was that traditional performance monitoring categories had become outdated, and that myriad tools were exacerbating complexity and frustration with incompatible consoles that were generating too many false positives.

The twin missions of the PADS Framework are to:

1. facilitate communication and collaboration among IT and business teams to proactively anticipate, identify and resolve application performance and user experience problems across the entire application delivery chain.

2. enable IT to orchestrate and manage internally and externally sourced services efficiently to improve decision-making and business outcomes.

A study we conducted in the fall of 2014 revealed that among S&P 500 companies, those that take a unified approach to gain better visibility into user experience outperform their peer group in revenue growth, profitability and market valuation. We also found that companies with a unified approach deploy fewer tools. This suggests that a higher level of efficiency with IT Ops data can drive sustained competitive advantage at lower total cost of ownership (TCO).

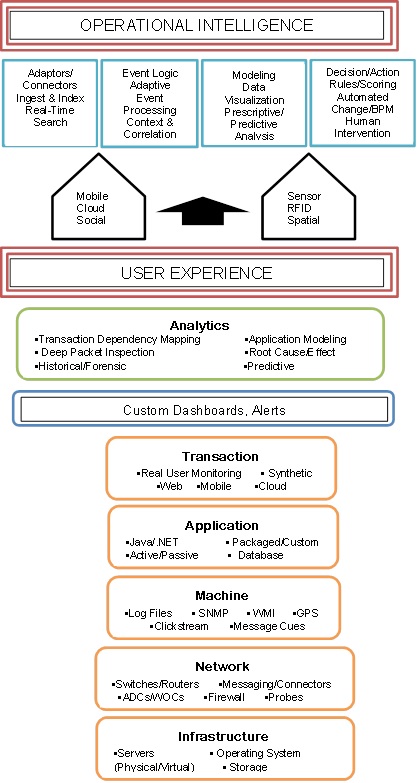

This data efficiency stems from better ops data integration. As the application delivery chain has extended to the cloud, specialized tools have emerged to monitor cloud-based and mobile apps. Since no single monitoring platform does it all, the idea behind the PADS Framework is to integrate real-time streams of machine data and other types of big data from newer tools with IT Ops data from traditional monitoring solutions. The combined view provides physical and logical knowledge of the computing environment.

The Key is Data Integration

It’s one thing to reduce the number of tools the team uses; it’s much more of a commitment to integrate the data these tools generate. Data integration is labor intensive and time consuming. IT teams get bogged down trying to integrate data from different tools. They end up spending more time writing scripts preparing data for analysis than gaining real-time insights into user experience and application performance.

Extended mean time to repair/resolution (MTTR) undermines employee engagement and customer satisfaction and loyalty. The cost of downtime for a business-critical application can be upwards of $1 million per hour, depending on the industry. Prolonged downtime also exposes the company to cyberattacks. The fallout from poor performance can include lost customers, fines for non-compliance and reputational damage that can take much longer to repair.

Modern integration tools automate much of the cleansing, matching, error handling and performance monitoring that IT Ops teams often struggle with manually. Application governance allows teams to implement a standardized approach to integrating diverse data sets, including those from SaaS applications and IaaS or PaaS clouds.
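To make the cleansing, matching and error-handling steps concrete, here is a minimal Python sketch of what a team might otherwise script by hand. It is an illustration only, not part of the PADS Framework or any particular product: the two feeds ("APM" and "cloud"), their field names (`app`, `ts`, `latency_s`, `service_name`, `time`, `latency_ms`) and the common `Metric` schema are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Metric:
    """Hypothetical common schema that every source is mapped into."""
    service: str
    timestamp: datetime
    latency_ms: float


def from_apm(record: dict) -> Metric:
    # Assumed APM export: epoch seconds, latency reported in seconds.
    return Metric(
        service=record["app"].strip().lower(),
        timestamp=datetime.fromtimestamp(record["ts"], tz=timezone.utc),
        latency_ms=float(record["latency_s"]) * 1000.0,
    )


def from_cloud(record: dict) -> Metric:
    # Assumed cloud-monitor export: ISO 8601 time, latency in milliseconds.
    ts = datetime.fromisoformat(record["time"])
    if ts.tzinfo is None:
        # Treat naive timestamps as UTC so all records stay comparable.
        ts = ts.replace(tzinfo=timezone.utc)
    return Metric(
        service=record["service_name"].strip().lower(),
        timestamp=ts,
        latency_ms=float(record["latency_ms"]),
    )


def integrate(apm_records, cloud_records):
    """Map both feeds into the common schema.

    Returns (metrics, rejected): clean records sorted by service and time,
    plus the raw records that failed cleansing and the reason why.
    """
    metrics, rejected = [], []
    for parse, records in ((from_apm, apm_records), (from_cloud, cloud_records)):
        for record in records:
            try:
                metrics.append(parse(record))
            except (KeyError, TypeError, ValueError, AttributeError) as exc:
                rejected.append((record, repr(exc)))
    metrics.sort(key=lambda m: (m.service, m.timestamp))
    return metrics, rejected
```

Calling `integrate(apm_records, cloud_records)` yields one time-ordered list per service that downstream analytics can consume, while malformed records are set aside for review rather than silently dropped. Even this toy version shows how quickly unit conversions, name normalization and error handling accumulate once more than two tools are involved.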

Application governance can be viewed as the sister of data governance. Data governance is based on bringing IT and business teams together to define common data definitions and data management best practices. The goal is to ensure that users have access to the highest quality data at the point of decision to optimize business outcomes.

Application governance does the same thing for the apps that drive the data. In concert with application governance, the PADS Framework also promotes DevOps best practices by breaking down IT data silos to foster better communication and collaboration while consolidating multiple functions often performed separately.

Source: Tech-Tonics Advisors

Conclusion

As the pace of business accelerates, companies are under increasing pressure to ensure that the right users have access to the right data in the right format at the point of decision. This has become an acute need as more companies incorporate cloud, mobile and social into computing architectures, business plans and processes.

But companies cannot leverage modern applications if user experience is sub-par. In today’s software-defined economy, it’s no longer adequate for apps to work; they’ve now got to work to user expectations. Even when every indicator, including key performance indicators (KPIs), tells IT that the app is performing to expectation, the end user is the final arbiter of application performance. If the user experience is unsatisfactory, business will suffer.

As part of taking a strategic approach to user experience and application performance, IT teams also need to treat data integration more strategically. This is vital to leveraging monitoring tools, particularly as the definition of “user experience” expands in the age of the Internet of Things (IoT) and machine-to-machine communications.

The PADS Framework can help IT teams think about how to integrate IT Ops data to gain essential insights into user experience. Through a unified approach to performance analytics, IT teams can help their companies leverage technology investments to discover, interpret and respond to the myriad events that impact their operations, security, compliance and competitiveness.

A more operationally efficient IT team enables businesses to act on intelligence gained from unified performance analytics and operational intelligence. By understanding key business drivers and working in concert with application owners, and with each other, IT teams can meet end-user performance expectations to facilitate strategic initiatives and positively impact financial results.