As Ferris Bueller once said, "Life moves pretty fast." It is especially true for those of us working in IT.

Microsoft releases two major Windows 10 updates per year, plus 12 monthly updates for the operating system. Change is unrelenting, and so are the potential dangers that accompany it.

Despite Microsoft being one of the largest, most trusted software vendors in the world, I could very likely find news articles pointing out failures with each new release over the last few years. These bugs can mean the applications you need to do your job don't function properly or, worse, don't work at all.

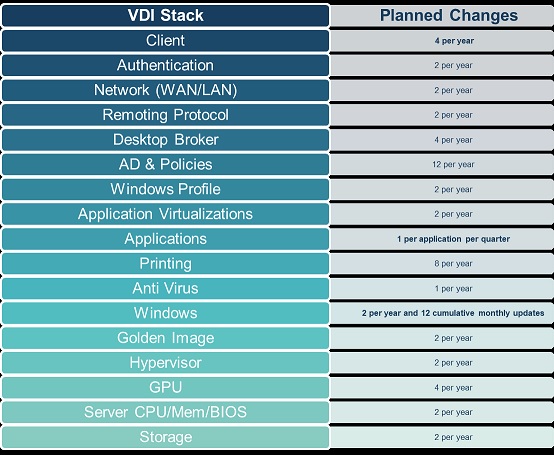

Of course, Microsoft is only one small part of your overall hardware and software stack, and each element can require frequent changes that affect you as an end user. The fact is, changes happen all the time in the overall VDI stack.

What Does Change Mean?

Consider that the average end user interacts with at least 8 applications. Then think about how important those applications are to the overall success of the business, and how often the interface between each application and the hardware needs to be updated. It's a potential minefield for business operations: any single update could explode in your face at any time.

Given the ever-accelerating pace of IT change, how can businesses cope?

Safe Not Sorry

As lockdown restrictions ease, I'll be off on some campervan adventures around Scotland. I want to be able to cook safely and have off-grid heating (it is Scotland after all), which means using gas. Now, as you'll know, installing gas heating in a small confined space comes with the risk of carbon monoxide poisoning, and to be honest, my cooking often comes with the risk of fire! So, as I'm aware of the danger, I'm putting in smoke and CO detectors, plus installing a fire blanket and a fire extinguisher. I value my van and, more importantly, my life.

Likewise, any company managing an ever-changing software stack needs to consider the risks associated with putting blind trust into the hands of software vendors like Microsoft. My advice would be to de-risk frequent IT changes with a robust application testing strategy.

Start by automating the testing of your applications, so you can see whether any problems appear after updates to the hardware and software platforms they reside on. Preferably, use a testing solution in which synthetic users perform all the typical activities that real users need to carry out in your application. You should then be able to answer the following questions after each change to your environment:

■ Does the software work?

■ How long does it take to do each business-critical task?

■ Is the application response time within an acceptable range?
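
To make those three questions concrete, here is a minimal sketch in Python of what a synthetic check could look like. It is an illustration under stated assumptions, not a description of any particular testing product: the task names, the stand-in actions `open_document` and `export_report`, and the time limits are all hypothetical placeholders for whatever your real users actually do.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class TaskResult:
    name: str
    succeeded: bool     # Does the software work?
    seconds: float      # How long did the business-critical task take?
    within_limit: bool  # Is the response time within an acceptable range?


def run_task(name: str, action: Callable[[], None], max_seconds: float) -> TaskResult:
    """Run one synthetic user action, timing it and catching any failure."""
    start = time.perf_counter()
    try:
        action()
        succeeded = True
    except Exception:
        # Any exception counts as "the task does not work" in this sketch.
        succeeded = False
    seconds = time.perf_counter() - start
    return TaskResult(name, succeeded, seconds, succeeded and seconds <= max_seconds)


def run_suite(tasks: List[Tuple[str, Callable[[], None], float]]) -> List[TaskResult]:
    """Run every (name, action, max_seconds) entry and collect the results."""
    return [run_task(name, action, limit) for name, action, limit in tasks]


# Hypothetical stand-ins for real user activities in your applications.
def open_document() -> None:
    time.sleep(0.01)  # pretend the application responded quickly


def export_report() -> None:
    raise RuntimeError("export failed after update")  # pretend an update broke this


if __name__ == "__main__":
    suite = [
        ("Open document", open_document, 2.0),
        ("Export report", export_report, 5.0),
    ]
    for result in run_suite(suite):
        status = "OK" if result.within_limit else "PROBLEM"
        print(f"{status}: {result.name} (worked={result.succeeded}, {result.seconds:.2f}s)")
```

Running the same suite before and after each change to your environment gives you a like-for-like comparison, which is what turns "it seems slower" into something you can measure.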

But what if you have fancy and custom-built applications? Look for a solution that can help you design custom scripts to test your custom applications.