As websites continue to advance, the underlying protocols they run on must change to meet the page load times users expect. This blog is the first in a 5-part series on APMdigest where I will discuss web application performance and how new protocols like SPDY, HTTP/2, and QUIC will hopefully improve it so we can have happy website users.

Start with Web Performance 101: The Bandwidth Myth

Start with Web Performance 101: 4 Recommendations to Improve Web Performance

So How Are We Doing?

In my last blog, I talked about all the different recommendations I've provided or come across over the years.

How are we doing with that? Are website owners out there listening?

Well, I decided to take a look at the archive — HTTP Archive, that is.

With HTTP Archive, I can look at some worldwide statistics on thousands of websites it monitors.

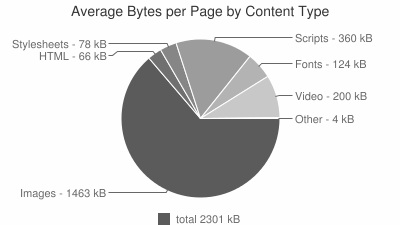

Let's look at bytes being sent to the browser.

As we can see, the average total size of a web page is a little over 2.3MB. And look at the largest share by file type: images account for about 63% of worldwide page sizes. Those file sizes can be reduced or minimized.

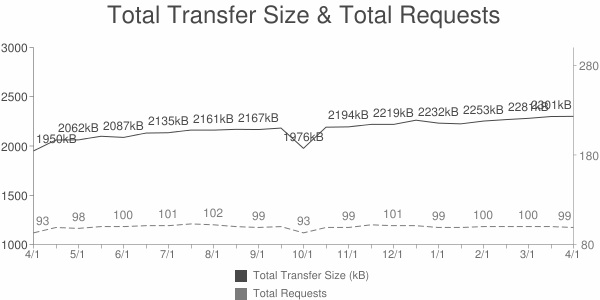

Okay. So maybe that was an outlier. In Performance Engineering, we never want to focus too much on averages. Percentiles and trends are things that give us better insight into whether something should be a concern or not.
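To illustrate why averages can mislead, here is a minimal Python sketch comparing the mean with the median and the 90th percentile. The page weights are made-up numbers chosen for illustration, not HTTP Archive data, and the variable names are my own.

```python
import statistics

# Hypothetical page weights in KB (made-up values, not HTTP Archive data).
# One very heavy page is included on purpose to skew the average.
page_kb = [400, 550, 600, 700, 800, 900, 1000, 1200, 1500, 15000]

mean_kb = statistics.mean(page_kb)
median_kb = statistics.median(page_kb)

# quantiles(n=10) returns 9 cut points; the last one is the 90th percentile.
p90_kb = statistics.quantiles(page_kb, n=10, method="inclusive")[8]

print(f"mean:   {mean_kb} KB")
print(f"median: {median_kb} KB")
print(f"p90:    {p90_kb} KB")
```

In this sample a single heavy page pulls the mean far above the median, which is why a percentile view gives a better picture of what a typical visitor downloads.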

So let's look at the trend in the past year.

We see that transfer sizes have been trending upward over the past year. Websites across the world have increased in size by about 18%. If that rate continues and compounds, in 5 years the average page will be roughly 2.3 times its current size, an increase of almost 130%! That's crazy!

While I think this is unlikely to happen with the increased importance placed on web performance, it's unbelievable to think we're increasing at this rate.
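As a quick sanity check on a projection like this, the sketch below compounds a yearly growth rate over several years. It assumes growth holds at a constant 18% every year, which is a simplification, and the function name `projected_growth` is purely illustrative.

```python
def projected_growth(annual_rate: float, years: int) -> float:
    """Return the size multiplier after compounding annual_rate for `years` years."""
    return (1 + annual_rate) ** years


# Assumption: page size keeps growing 18% per year for 5 years.
multiplier = projected_growth(0.18, 5)
increase_pct = (multiplier - 1) * 100

print(f"{multiplier:.2f}x the current size, a {increase_pct:.0f}% increase")
# prints "2.29x the current size, a 129% increase"
```

Applied to today's average of roughly 2.3MB, that multiplier would put the average page at a bit over 5MB.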

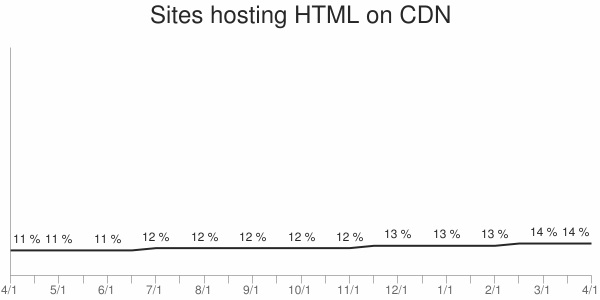

In my last blog, I mentioned how important it is to reduce latency. One of the ways to do that is to implement a content delivery network.

So let's see how that's going across the world.

We can see that only about 14% of websites have implemented a Content Delivery Network (CDN). With free CDNs out there, everyone should be using a CDN.

It is encouraging, though, that we're trending upward on that one.

Now that we have a sense of how websites are doing with HTTP requests across the globe, I want to look at the protocol itself. If website operators are only slowly making improvements, what can be done with the protocol itself to help?

Read Web Performance and the Impact of SPDY, HTTP/2 & QUIC - Part 2, covering the limitations of HTTP/1.0 and HTTP/1.1.