We have seen Application Programming Interfaces (APIs) emerge as a new engine for digital business transformation, tying into many parts of the organization, including new product innovation and new partnership integrations. By creating effective API-centric architectures, companies are breaking free from the tedium of monolithic design, so that different teams can work independently and quickly produce new offerings that keep pace with market innovation. And as more APIs are published so that applications can easily expose processes to other applications, a world of new opportunity is open to every business with the appetite and ability to capitalize on the API economy.

According to Gartner VP and distinguished analyst Kristin R. Moyer, "The API economy is an enabler for turning a business or organization into a platform." It's a positive message for platform-based businesses that are experiencing accelerated digital transformation fueled by successful API management, but it should also come with a warning: given APIs' essential role in driving innovation, there should be more focus than ever on testing APIs thoroughly so that they deploy and function as intended.

This blog post looks at end users, the people waiting for your websites or applications to load, and at the effects insufficient testing may have on them: site abandonment, loss of brand loyalty, and a switch to a competitor's solution.

Digital Desertion: Consumer Survey Results

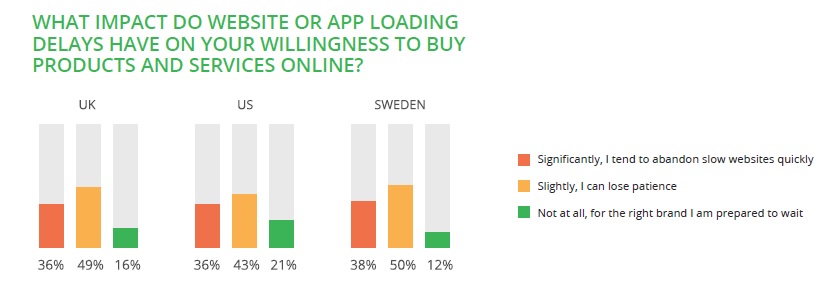

Now more than ever, every second counts in the online world. Slow rendering time and digital disappointment will often drive consumers to a competitor's offerings. Apica recently conducted a survey with research agency 3Gem in an effort to better understand consumers and how they are interacting with websites and apps. We surveyed 2,500 web and app users in the US, UK, and Sweden to explore their expectations regarding digital experiences and to uncover exactly what would impact their opinion of brands.

Read "Poor Website and App Performance Results in Digital Desertion" to learn more about the survey results.

On average, 83 percent of consumers across all markets are negatively affected by poor website or app performance. While around half of respondents say they lose patience and are somewhat negatively affected by page-loading delays, over 35 percent say they quickly abandon poorly performing sites and apps, often in 10 seconds or less. The survey also found that 75 percent of users expect webpages and apps to load faster than they did three years ago.

These alarming statistics often stem from high expectations of web functionality that go unmet. With APIs driving much of the new functionality on today's e-commerce sites, thorough testing has become more important than ever. API testing can cover both internal and external (third-party) APIs, and it is the combination of the two that users experience when they try to complete a transaction.

Validating APIs can be automated to a large extent and should be done for every release. The secret lies in validating constantly, for every code and infrastructure change. Validating third-party APIs is also a constant headache, as providers do not always inform you about changes. Of course, you should not abandon conventional UI testing; but for performance and uptime, API testing delivers more bang for the buck.
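To make automated, per-release API validation a little more concrete, here is a minimal sketch in Python using only the standard library. It assumes a simple pass/fail rule of our own choosing (the expected HTTP status plus a latency budget); the function names, default thresholds, and suite format are hypothetical illustrations, not Apica's tooling or any vendor's API. A build pipeline could run something like this after each deployment and fail the build when any check fails.

```python
# Hypothetical sketch of an automated API check. The pass/fail rule
# (expected HTTP status + latency budget) is an assumption for illustration.
import time
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class CheckResult:
    url: str
    status: Optional[int]  # None means no HTTP response (DNS failure, refused, timeout)
    latency_s: float
    passed: bool
    reason: str


def evaluate(url: str, status: Optional[int], latency_s: float,
             expected_status: int = 200, max_latency_s: float = 1.0) -> CheckResult:
    """Apply the (assumed) pass/fail rules to one observed response."""
    if status is None:
        return CheckResult(url, status, latency_s, False, "no response")
    if status != expected_status:
        return CheckResult(url, status, latency_s, False,
                           f"expected HTTP {expected_status}, got {status}")
    if latency_s > max_latency_s:
        return CheckResult(url, status, latency_s, False,
                           f"latency {latency_s:.3f}s exceeds budget {max_latency_s:.3f}s")
    return CheckResult(url, status, latency_s, True, "ok")


def check_endpoint(url: str, expected_status: int = 200,
                   max_latency_s: float = 1.0, timeout_s: float = 10.0) -> CheckResult:
    """Call one endpoint and time the full request, including reading the body."""
    status: Optional[int]
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            resp.read()
            status = resp.status
    except urllib.error.HTTPError as err:  # 4xx/5xx still count as a response
        status = err.code
    except OSError:  # URLError, refused connections, socket timeouts
        status = None
    latency_s = time.perf_counter() - start
    return evaluate(url, status, latency_s, expected_status, max_latency_s)


def run_suite(checks: Iterable[dict]) -> bool:
    """Run every check, print one line per result, and return True only if all pass."""
    results = [check_endpoint(**check) for check in checks]
    for r in results:
        mark = "PASS" if r.passed else "FAIL"
        print(f"{mark} {r.url} status={r.status} latency={r.latency_s:.3f}s ({r.reason})")
    return all(r.passed for r in results)
```

For example, a CI step might call `run_suite([{"url": "https://api.example.com/health", "max_latency_s": 0.5}])` (a placeholder endpoint and budget) and exit with a non-zero code when it returns `False`. Keeping `evaluate` separate from the network call makes the pass/fail rules easy to unit-test, and the same list of checks could be scheduled against production to act as a basic monitor.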

Done properly, API testing will minimize deployment issues and catch performance issues earlier in the development cycle, when they are much easier to fix than issues caught on the day of the release.

Make sure that your test tool or vendor supports security tools as well as modularized selection of test data. It is a big plus if the test scripts can be reused for monitoring, ideally updated via API from the build engine.

What Does This Mean for Your Business?

Apica's survey results affirm that consumers are more demanding and less forgiving when it comes to website and app performance, and businesses should heed the warning. With three-quarters of users expecting sites and apps to perform faster than they did three years ago, businesses must recognize that they need to manage the peaks and troughs of online traffic and deliver consistently exceptional customer experiences.

The survey also highlights the "Digital Desertion" syndrome: users who are disappointed by their digital experience often leave your site for a competitor's that provides a better one. The revenue and brand impact is compounded by the fact that nearly 4 in 10 consumers say they would likely share a poor online experience with friends or colleagues.

There is no disputing it: negative digital experiences are likely to hurt brand reputation and loyalty.

Today, websites and apps are an integral, consumer-facing part of your business. The pressure is on companies to continuously monitor and optimize their online performance so that they deliver a digital experience that meets today's user expectations. This means taking a proactive approach across the development lifecycle: conducting comprehensive performance testing to safeguard the user experience in production, and putting monitoring in place to respond to production issues before they affect users. Don't leave your revenue, brand, and customer experience to chance.