Start with The PADS Framework for Compliance and Security - Part 1

Below we highlight new regulations in the global securities industry that underscore the risks companies face when they don’t have a good handle on user experience or application performance across the application delivery chain.

Capital Markets: Pressure to Avoid Market Disruption

Global securities markets have become increasingly reliant on technology and automated systems that operate at light speed. But in recent years, these systems have suffered both minor glitches and major outages. They have also been susceptible to cyberattacks, further underscoring their vulnerability.

To ensure the integrity and resilience of IT systems and reduce the severity and frequency of these disruptions, the Securities and Exchange Commission (SEC) adopted Regulation Systems Compliance and Integrity (Regulation SCI) in November 2014, with compliance required beginning in November 2015. The regulation applies to so-called SCI entities, including national securities exchanges, certain high-volume alternative trading systems, clearing agencies, plan processors and self-regulatory organizations such as the Financial Industry Regulatory Authority (FINRA) and the Municipal Securities Rulemaking Board (MSRB).

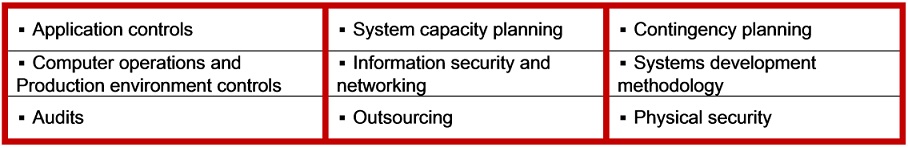

Covered entities must design, develop, test, maintain and monitor their operational systems according to Regulation SCI's standards and best practices. These policies apply to nine IT and security domains:

Source: SEC, Tech-Tonics Advisors

Regulation SCI requires new reporting and disclosure of disruptions, intrusions and other adverse events – with special emphasis on customer personal information. There are also new requirements to notify affected customers and plan participants if the events are "major" or involve "critical SCI systems."

Covered entities must perform ongoing audits and risk assessments. This includes evaluating IT governance services performed by specific entities. Material changes to any “SCI system” – whether existing or planned – must be reported on a quarterly basis. A covered entity that does not implement compliant controls, or that neglects to report failures to the SEC, could be subject to legal action.

Beyond testing requirements built into business continuity and disaster recovery standards, Regulation SCI also mandates industry-wide coordinated testing to ensure systems-wide functionality and safety. While testing has already begun, the industry has until November 2016 to get processes in place.

Market disruptions have resulted in extreme volatility, fractured investor confidence, catastrophic losses and unprecedented fines for compliance violations. Intelligence across the entire application delivery chain is essential for all covered entities to comply with Regulation SCI.

Conclusion

In the software-defined economy, application performance and user experience are critical differentiators that drive both business and risk management objectives. The risks of poor application performance and user experience include business interruption, eroding employee engagement and customer satisfaction, regulatory noncompliance and reputational damage.

The underbelly of modern distributed computing environments is growing regulatory oversight of systems efficacy and security. While regulations are tailored to specific industries, the connectivity and interdependencies of systems are similar across all sectors. Regulators are increasingly focused on these relationships – and the underlying systems and applications – that make up application delivery chains.

More companies are incorporating cloud, mobile and social into computing architectures, business plans and processes. With the growth of containers and microservices, coupled with the emerging Internet of Things (IoT), it is imperative for IT teams and senior management to embrace the strategic importance of user experience and application performance to achieve ROI and risk management objectives.