This blog is the final installment in a 5-part series on APMdigest where I discuss web application performance and how new protocols like SPDY, HTTP/2, and QUIC will hopefully improve it so we can have happy website users.

Start with Web Performance 101: The Bandwidth Myth

Start with Web Performance 101: 4 Recommendations to Improve Web Performance

Start with Web Performance and the Impact of SPDY, HTTP/2 & QUIC - Part 1

Start with Web Performance and the Impact of SPDY, HTTP/2 & QUIC - Part 2

Start with Web Performance and the Impact of SPDY, HTTP/2 & QUIC - Part 3

Start with Web Performance and the Impact of SPDY, HTTP/2 & QUIC - Part 4

HTTP/2 Implementations

It has been almost a year since HTTP/2 became a ratified standard. Previously, I talked about how widely supported it is: only 4% of the top 2 million Alexa sites truly support it.

Does your website support it? What about your web host provider?

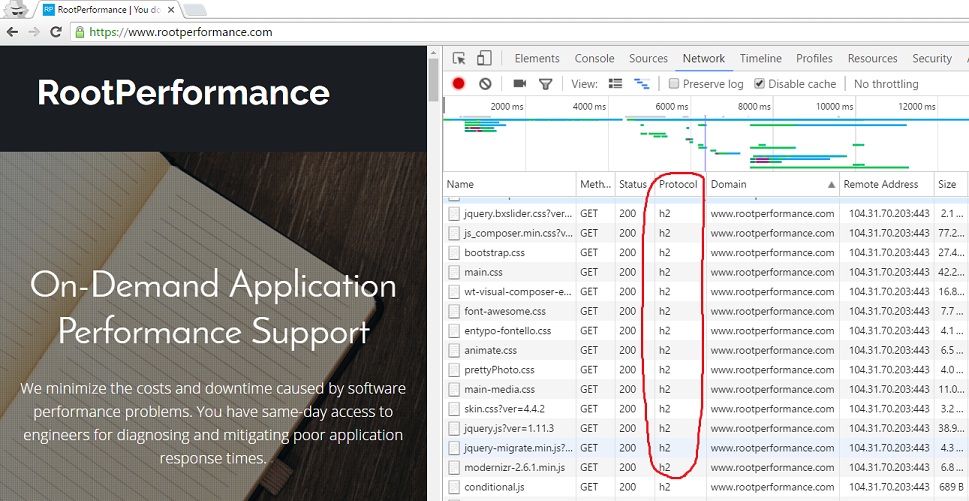

One place to check is the Google Chrome browser itself, by opening Chrome Developer Tools and looking at the Protocol column on the Network tab.

You can also check by doing a packet capture with Wireshark. Or go to tools.keycdn.com/http2-test.
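If you would rather script the check, here is a minimal sketch using Python's standard `ssl` module. It offers `h2` and `http/1.1` during the TLS handshake (via ALPN) and reports which one the server picks. The function names and the `example.com` host in the comment are my own placeholders, and this only covers HTTP/2 over TLS, which is how browsers use it.

```python
import socket
import ssl
from typing import Optional


def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Return the ALPN protocol the server selects, e.g. 'h2' or 'http/1.1'.

    Returns None if the server does not negotiate ALPN at all.
    """
    context = ssl.create_default_context()
    # Offer HTTP/2 first, then fall back to HTTP/1.1.
    context.set_alpn_protocols(["h2", "http/1.1"])
    with socket.create_connection((host, port), timeout=timeout) as raw_sock:
        with context.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            return tls_sock.selected_alpn_protocol()


def supports_http2(protocol: Optional[str]) -> bool:
    """True when the negotiated ALPN token is 'h2' (HTTP/2 over TLS)."""
    return protocol == "h2"


# Usage (needs network access; example.com is a placeholder host):
#   proto = negotiated_protocol("example.com")
#   print(proto, supports_http2(proto))
```

Run it against your own site and your web host's default domain to see whether each one answers with `h2`.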

By now, most web browsers support the new version of HTTP. The top five (Chrome, Firefox, IE/Edge, Opera and Safari) all support HTTP/2, at least partially. The two most widely used web servers, Apache and Nginx, support it as well.

Previously, I mentioned a number of workarounds that developers used to make their websites faster with HTTP/1.1. With HTTP/2, some of these workarounds can actually degrade performance.

The Unsharding

Because HTTP/2 uses only one connection per host, domain sharding can undermine a developer's attempt to improve performance. So if sharding was used previously, an upgrade to HTTP/2 means that the domains must be unsharded.

The Uncombine

Combining JavaScript and CSS files into one file helped to reduce the number of requests and connections needed on HTTP/1.1. With HTTP/2 multiplexing requests over a single connection, this is no longer needed.

However, care must be taken, and uncombining should be tested on a case-by-case basis. A large file can compress better than the same content split into smaller files, so it may not be to your advantage to uncombine if you would end up with a lot of small files.
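To get a feel for the compression trade-off, here is a rough sketch that compresses twenty small, similar stylesheets one by one and then as a single combined file. The CSS content is made up for illustration, and `zlib` stands in for whatever compression your server applies, so treat the numbers as directional only.

```python
import zlib

# Twenty hypothetical small stylesheets that share most of their rules.
small_files = [
    (f".widget-{i} {{ color: #333; padding: 4px 8px; border-radius: 3px; }}\n" * 5).encode()
    for i in range(20)
]


def compressed_size(data: bytes) -> int:
    """Size in bytes after DEFLATE compression at the highest level."""
    return len(zlib.compress(data, 9))


# Each file compressed on its own, as if served separately.
separate_total = sum(compressed_size(f) for f in small_files)
# The same bytes concatenated and compressed once.
combined_total = compressed_size(b"".join(small_files))

print("separate:", separate_total, "bytes; combined:", combined_total, "bytes")
```

The combined file wins here because the compressor can reuse patterns across what used to be file boundaries. Real assets may behave differently, which is why this needs testing per site.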

The Uninlining

Inlining scripts directly into the HTML was another way to reduce the number of connections and round-trips to the server. With HTTP/2 and its single TCP connection, this is no longer needed.

HTTP/2 Pros & Cons

There are a number of advantages to using HTTP/2. It was designed to:

■ Substantially and measurably improve end-user perceived latency over HTTP/1.1 using TCP

■ Address the head of line blocking problem in HTTP

■ Not require multiple connections to a server to enable parallelism, thus improving its use of TCP

■ Retain the semantics of HTTP/1.1, like header fields, status codes, etc.

■ Clearly define how HTTP/2 interacts with HTTP/1.x via the Upgrade header field

But despite these advantages, there are still some disadvantages that the new protocol version has not addressed, including:

■ Unable to get around TCP head of line blocking, particularly during packet loss

■ TCP's congestion avoidance algorithm increases serialization delay

■ TLS connection setup still takes time

■ Binary format makes troubleshooting a bit more difficult (for people like me), since the traffic can't be read as plaintext and can't be decrypted without the TLS encryption keys

We Need to Be QUIC

So we see that we still have a number of limitations even with HTTP/2. Although, I have to admit, the last one is somewhat selfish.

One big limitation is the TCP protocol. Due to its connection-oriented nature, there's no getting around the head of line blocking and the time it takes to open and close the connection.
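A toy model helps show why head of line blocking hurts multiplexed traffic. In the sketch below, three streams share one connection and a single packet is lost and retransmitted. All arrival times are hypothetical, and the two functions are simplifications of my own, not real protocol code: the first delivers strictly in order like TCP, the second only enforces order within each stream.

```python
from collections import defaultdict

# (stream, arrival_ms) in the order the sender transmitted the packets.
# Hypothetical numbers: the second packet is lost once and its
# retransmission arrives at 130 ms.
packets = [
    ("A", 10),
    ("A", 130),  # lost, then retransmitted
    ("B", 30),
    ("B", 40),
    ("C", 50),
]


def finish_times_single_ordered_stream(pkts):
    """TCP-like: a packet is delivered only after every earlier packet has arrived."""
    finish = {}
    latest = 0
    for stream, arrival in pkts:
        latest = max(latest, arrival)
        finish[stream] = latest
    return finish


def finish_times_independent_streams(pkts):
    """Per-stream ordering: a loss only delays the stream it belongs to."""
    finish = defaultdict(int)
    for stream, arrival in pkts:
        finish[stream] = max(finish[stream], arrival)
    return dict(finish)


print(finish_times_single_ordered_stream(packets))
print(finish_times_independent_streams(packets))
```

With in-order delivery, streams B and C are stuck until the retransmission for stream A shows up. With per-stream ordering, only A waits.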

Google wanted a way around this, and in 2012 set out to develop a protocol that runs on top of UDP, which is a connectionless protocol. The protocol is called Quick UDP Internet Connections, or QUIC. Another clever name by Google?

Clever or not, Google needed a protocol with quicker connection setup time and quicker retransmissions. Unlike TCP, UDP would allow for this. They wanted to take some of the benefits of the work done with SPDY, which ultimately went into the HTTP/2 standard, such as multiplexed HTTP communication, and run them over UDP rather than TCP.

The main goal? To reduce overall latency across the Internet for a user's interactions.

QUIC implements various TCP features, such as flow control and congestion avoidance, but without limitations like the round-trip time needed for connection setup. Because UDP is connectionless, a client that has talked to a server before can start sending data right away, with zero round trips spent on setup, rather than first talking to the other side to ensure it's available to talk.
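To put rough numbers on connection setup, here is a small back-of-the-envelope calculation. The round-trip counts are the commonly cited ones (one for the TCP handshake plus two for a full TLS 1.2 handshake, one for a first QUIC contact, zero for a repeat QUIC contact), and the 100 ms RTT in the usage comment is just an assumed value for illustration.

```python
# Round trips spent on setup before the first HTTP request can be sent.
SETUP_ROUND_TRIPS = {
    "TCP + TLS 1.2 (new connection)": 1 + 2,  # TCP handshake + full TLS 1.2 handshake
    "QUIC (first contact)": 1,
    "QUIC (repeat contact)": 0,
}


def setup_delay_ms(round_trips: int, rtt_ms: float) -> float:
    """Time lost to connection setup for a given round-trip time."""
    return round_trips * rtt_ms


def setup_table(rtt_ms: float) -> dict:
    """Setup delay in milliseconds for each transport at the given RTT."""
    return {name: setup_delay_ms(n, rtt_ms) for name, n in SETUP_ROUND_TRIPS.items()}


# Usage, assuming a 100 ms round trip between client and server:
#   for name, delay in setup_table(100).items():
#       print(f"{name}: {delay} ms before the first request")
```

The gap grows with the round-trip time, so users far from the server or on slow mobile links stand to gain the most.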

Where is QUIC?

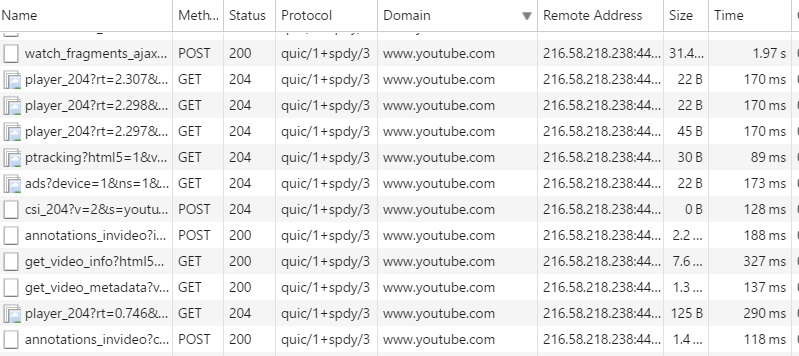

The most common place I've come across QUIC being used is on YouTube.

Have you ever compared the speed of a YouTube video to that of other providers like Wistia and Vimeo? Where I live, I'm lucky to get 3Mbps from my ISP. A video on YouTube rarely buffers, but I can almost always count on buffering when watching a video hosted on Wistia or Vimeo. As you can see in the screenshot below, the protocol being used on YouTube is a mix of QUIC and SPDY.

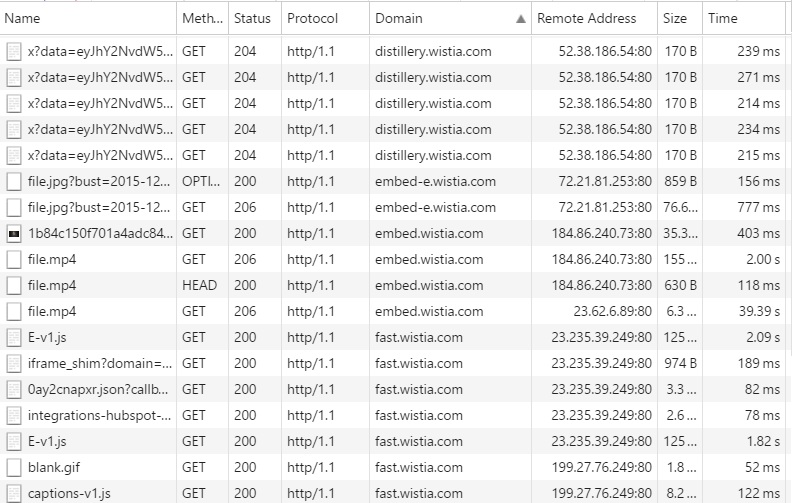

Contrast that with the screenshot I took from Wistia's site, below.

They are still largely using HTTP/1.1. They're not even on HTTP/2 yet. I'm sure they are doing a number of other things to make their web properties faster, but that explains to me why I rarely get any buffering on YouTube compared to Wistia.

Conclusion

If the speed with which SPDY was tested and went into the HTTP/2 standard is any indication (it took about three years from SPDY draft release to HTTP/2 draft release), is it possible that we could have a replacement for the TCP protocol on the web in the next couple of years? This should be interesting and exciting!

Jean Tunis is Senior Consultant and Founder of RootPerformance.